Apps & Software

Latest about Apps & Software

-

-

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published -

AI, but with linksGoogle's AI Search is finally doing a better job of linking to the webBy Sanuj Bhatia Published

AI, but with linksGoogle's AI Search is finally doing a better job of linking to the webBy Sanuj Bhatia Published -

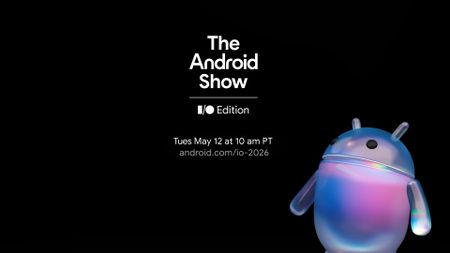

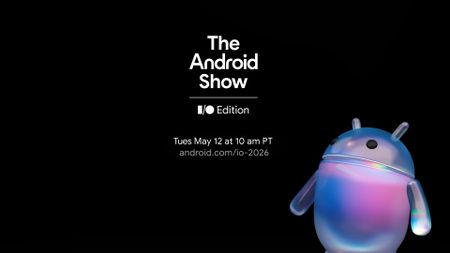

Head to the ShowMajor hype: Google's Android Show premieres May 12 to glimpse the futureBy Nickolas Diaz Last updated

Head to the ShowMajor hype: Google's Android Show premieres May 12 to glimpse the futureBy Nickolas Diaz Last updated -

On the wayIt's happening: Apple's iOS 26.5 prepares RCS encryption with AndroidBy Nickolas Diaz Published

On the wayIt's happening: Apple's iOS 26.5 prepares RCS encryption with AndroidBy Nickolas Diaz Published -

That explains itGoogle finally explains why the AICore app is eating up your storageBy Sanuj Bhatia Published

That explains itGoogle finally explains why the AICore app is eating up your storageBy Sanuj Bhatia Published -

The paywall is downYou no longer have to pay for Gemini’s smartest organization toolBy Jay Bonggolto Published

The paywall is downYou no longer have to pay for Gemini’s smartest organization toolBy Jay Bonggolto Published -

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published

-

Explore Apps & Software

AI

-

-

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published -

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published -

Moto AIMotorola Moto AI: Features, availability, settings, and moreBy Derrek Lee Last updated

Moto AIMotorola Moto AI: Features, availability, settings, and moreBy Derrek Lee Last updated -

Busy AINow, Gemini might get proactive with timely, personalized 'suggestions'By Nickolas Diaz Published

Busy AINow, Gemini might get proactive with timely, personalized 'suggestions'By Nickolas Diaz Published -

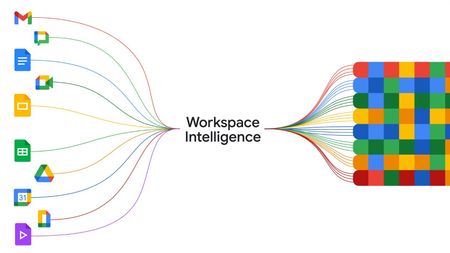

Automated AI futureWorkspace Intelligence is Google's agentic AI era for true assistance with GeminiBy Nickolas Diaz Published

Automated AI futureWorkspace Intelligence is Google's agentic AI era for true assistance with GeminiBy Nickolas Diaz Published -

The right priceGoogle builds on its hotel price tracking for Android with a summer updateBy Nickolas Diaz Published

The right priceGoogle builds on its hotel price tracking for Android with a summer updateBy Nickolas Diaz Published -

It's all about youEasy as pie: Google's Gemini uses your memories for AI photos that feel personalBy Nickolas Diaz Published

It's all about youEasy as pie: Google's Gemini uses your memories for AI photos that feel personalBy Nickolas Diaz Published -

Welcome, macOSMac, meet Gemini: Google's AI gives Apple's macOS a 'native' experience for sharing and moreBy Nickolas Diaz Published

Welcome, macOSMac, meet Gemini: Google's AI gives Apple's macOS a 'native' experience for sharing and moreBy Nickolas Diaz Published -

New look, who disTom's Guide just got a massive upgrade, and it's making it easy to find the tech you're looking forBy Derrek Lee Published

New look, who disTom's Guide just got a massive upgrade, and it's making it easy to find the tech you're looking forBy Derrek Lee Published

-

Android Auto

-

-

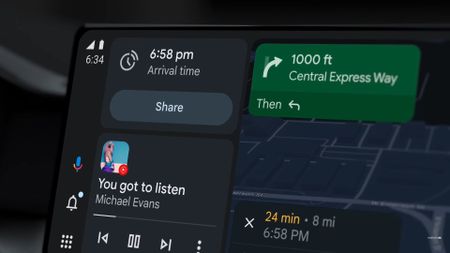

Smarter driving5 Android Auto settings I always change on any new Android phoneBy Roydon Cerejo Published

Smarter driving5 Android Auto settings I always change on any new Android phoneBy Roydon Cerejo Published -

Android Auto bug is making the signal icon vanish for some usersBy Sanuj Bhatia Published

Android Auto bug is making the signal icon vanish for some usersBy Sanuj Bhatia Published -

Finally fixedAndroid's Driving Mode is finally smarter about when it turns onBy Sanuj Bhatia Published

Finally fixedAndroid's Driving Mode is finally smarter about when it turns onBy Sanuj Bhatia Published -

Where'd it go?Something's missing: Android Auto users report a jarring bug in Google MapsBy Nickolas Diaz Published

Where'd it go?Something's missing: Android Auto users report a jarring bug in Google MapsBy Nickolas Diaz Published -

It might finally happenAndroid Auto may finally let you cast media from your phoneBy Sanuj Bhatia Published

It might finally happenAndroid Auto may finally let you cast media from your phoneBy Sanuj Bhatia Published -

Gemini-fiedGemini transforms Android Auto with new AI features for a smarter driveBy Nandika Ravi Published

Gemini-fiedGemini transforms Android Auto with new AI features for a smarter driveBy Nandika Ravi Published -

Bye, Assistant!Gemini for Android Auto is starting to replace Google AssistantBy Brady Snyder Published

Bye, Assistant!Gemini for Android Auto is starting to replace Google AssistantBy Brady Snyder Published -

Eyes on the RoadGoogle starts quietly rolling out an essential button on Android AutoBy Nickolas Diaz Published

Eyes on the RoadGoogle starts quietly rolling out an essential button on Android AutoBy Nickolas Diaz Published -

game overGoogle may be pulling the plug on Android Auto’s in-car mini-gamesBy Jay Bonggolto Published

game overGoogle may be pulling the plug on Android Auto’s in-car mini-gamesBy Jay Bonggolto Published

-

Android OS

Head to the Show: Major hype: Google's Android Show premieres May 12 to glimpse the future (By Nickolas Diaz)

On the way: It's happening: Apple's iOS 26.5 prepares RCS encryption with Android (By Nickolas Diaz)

QPR testing starts: Android 17 isn't out yet, but Google is already testing its first big update (By Sanuj Bhatia)

One step closer: Android 17 hits a major milestone as Google releases its last 'scheduled' beta (By Sanuj Bhatia)

Tap, tap, overlap: Tap n' go: Android's rumored 'Tap to Share' UI might've just broken cover (By Nickolas Diaz)

AC Polls: We asked, you answered: Android users pick between gestures and 3-button navigation, and the top choice might surprise you (By Derrek Lee)

Payout begins: Google's $135M Android data settlement is getting closer, and you can now set your payout method (By Sanuj Bhatia)

How To: I always add these 6 quick settings tiles to my Android phone when setting it up for the first time (By Namerah Saud Fatmi)

Multitasking is finally real: Android 17 Beta 3 finally brings the desktop multitasking we’ve been waiting for (By Jay Bonggolto)

Gmail

-

-

New address, same inboxI changed my embarrassing Gmail username without losing anything, and you can tooBy Sanuj Bhatia Published

New address, same inboxI changed my embarrassing Gmail username without losing anything, and you can tooBy Sanuj Bhatia Published -

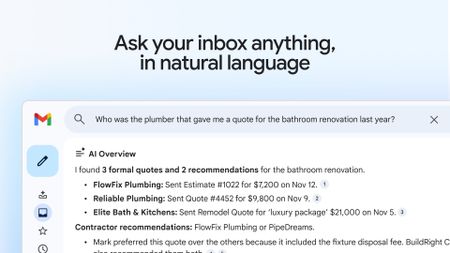

That's a steep priceGmail's new AI Inbox is here, but it'll cost you $250 a monthBy Sanuj Bhatia Published

That's a steep priceGmail's new AI Inbox is here, but it'll cost you $250 a monthBy Sanuj Bhatia Published -

Gemini takes overGmail is getting a new AI inbox as Google brings Gemini front and centerBy Sanuj Bhatia Published

Gemini takes overGmail is getting a new AI inbox as Google brings Gemini front and centerBy Sanuj Bhatia Published -

A long-awaited featureGmail might finally let you switch to a new address without starting overBy Sanuj Bhatia Published

A long-awaited featureGmail might finally let you switch to a new address without starting overBy Sanuj Bhatia Published -

Get a previewGmail gives Android users a window into email attachments with this updateBy Nickolas Diaz Published

Get a previewGmail gives Android users a window into email attachments with this updateBy Nickolas Diaz Published -

Sleigh Bells ring...Google brings a unified 'Purchases' tab to Gmail ahead of the holiday rushBy Nickolas Diaz Published

Sleigh Bells ring...Google brings a unified 'Purchases' tab to Gmail ahead of the holiday rushBy Nickolas Diaz Published -

new UX styleGmail's new Material 3 Expressive design is secretly hitting some inboxesBy Jay Bonggolto Published

new UX styleGmail's new Material 3 Expressive design is secretly hitting some inboxesBy Jay Bonggolto Published -

Quick replyGmail will now let you react to emails with emojisBy Brady Snyder Published

Quick replyGmail will now let you react to emails with emojisBy Brady Snyder Published -

Mail upgradesGmail's new search results prioritize relevant emails over recent onesBy Brady Snyder Published

Mail upgradesGmail's new search results prioritize relevant emails over recent onesBy Brady Snyder Published

-

Google Assistant

-

-

Bye, AssistantGoogle Assistant could shut down for Android Auto in March 2026By Brady Snyder Published

Bye, AssistantGoogle Assistant could shut down for Android Auto in March 2026By Brady Snyder Published -

New look!Google's song search evolves with a modern Gemini-inspired UI on AndroidBy Nandika Ravi Published

New look!Google's song search evolves with a modern Gemini-inspired UI on AndroidBy Nandika Ravi Published -

New look!Google's voice and song search gets a major overhaul on Android after yearsBy Nandika Ravi Published

New look!Google's voice and song search gets a major overhaul on Android after yearsBy Nandika Ravi Published -

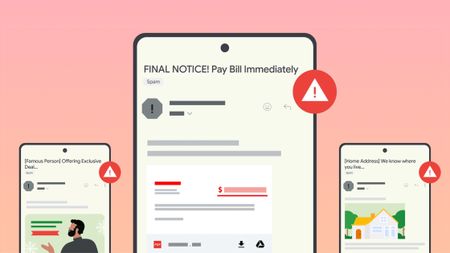

Stay in the knowGoogle introduces new tools to help users fight against evolving phishing scams effectivelyBy Nandika Ravi Published

Stay in the knowGoogle introduces new tools to help users fight against evolving phishing scams effectivelyBy Nandika Ravi Published -

Google OutageGoogle, Gmail, and Meet hit by widespread outage, causing login issuesBy Nandika Ravi Published

Google OutageGoogle, Gmail, and Meet hit by widespread outage, causing login issuesBy Nandika Ravi Published -

New voicesGoogle is spicing up its voice list on Search, according to a new leakBy Nandika Ravi Published

New voicesGoogle is spicing up its voice list on Search, according to a new leakBy Nandika Ravi Published -

New Google AI plansNew Google AI Pro and $249/month Ultra subscription announced at I/OBy Vishnu Sarangapurkar Published

New Google AI plansNew Google AI Pro and $249/month Ultra subscription announced at I/OBy Vishnu Sarangapurkar Published -

Easy-peesyGoogle app on iOS gets a new feature that will 'Simplify' text onlineBy Nandika Ravi Published

Easy-peesyGoogle app on iOS gets a new feature that will 'Simplify' text onlineBy Nandika Ravi Published -

ByeGoogle officially killed Driving Mode after stripping most of its features in 2024By Brady Snyder Published

ByeGoogle officially killed Driving Mode after stripping most of its features in 2024By Brady Snyder Published

-

Google Maps

Don't stress: Get in: Android Auto EVs see AI battery predictions in Google Maps for stress-free plans (By Nickolas Diaz)

It's just like new: 'Immersive Navigation' in Google Maps is like opening a new app for the modern era of driving (By Nickolas Diaz)

Limiting features? Google Maps might keep things from you if you don't sign in to an account (By Nickolas Diaz)

Test run: Google Maps might get a trial space for new features, and 'Ask Maps' could headline (By Nickolas Diaz)

Out on a walk: My walks just got a lot better, as Google says 'Gemini in Navigation' supports more (By Nickolas Diaz)

Better battery life: How to enable and use Google Maps power saving mode (By Brady Snyder)

Let's go there: Google Maps gets a major upgrade with Gemini for smooth navigation on Android and iOS (By Nickolas Diaz)

Let's go there: Google Maps gets a Gemini boost to help you navigate the roads like a pro (By Nickolas Diaz)

Double Rainbow: Here's what the redesigned Google Photos and Maps icons look like (By Nickolas Diaz)

Google Pay

How To: This is the best Google Pay feature you're not using in India (By Harish Jonnalagadda)

How To: I used this hidden Google Pay feature to automate credit card bill payments (By Harish Jonnalagadda)

No more drain: Android’s next update is finally addressing your phone’s biggest battery hogs (By Jay Bonggolto)

On Time: Google Wallet is helping Android users effortlessly catch their plane or train (By Nickolas Diaz)

Quick Taps: Google Pay's fresh updates will unlock better shopping rewards for Chrome users (By Nickolas Diaz)

Pay Your Way: Android users get another option to pay later with Klarna on Google Pay (By Nickolas Diaz)

Easier access: Google Wallet brings digital ID support to UK, more US states (By Nandika Ravi)

Now arriving at... Google Wallet brings real-time train status alerts to Android, and teases I/O 2025 (By Nickolas Diaz)

Next stop is... Londoners can join the Google Pay 'Tube Challenge' for badges and city lore (By Nickolas Diaz)

Google Play Store

It's a problem: Google puts apps that'll drain your battery on blast in updated Play Store listings (By Nickolas Diaz)

A downgrade to downgrading: Google just made uninstalling system app updates more complicated (By Sanuj Bhatia)

Free cash: Here's when Google Play Store users will get an automatic cash settlement payout (By Brady Snyder)

You win! Focus Friend and Pokémon TCG Pocket shine in Google Play's Best of 2025 awards (By Nickolas Diaz)

Find it faster: Google Play enhances search with new 'Where to watch' streaming feature (By Sanuj Bhatia)

No more sifting: Google's upcoming review search feature might soon help you save time on the Play Store (By Jay Bonggolto)

Gift cards go green: You can now send Starbucks and Disney gift cards straight from Google Play (By Jay Bonggolto)

Epic v. Google: Google and Epic's settlement proposal could finally end the multi-year Play Store dispute (By Brady Snyder)

Ditch the scroll: Play Store’s new AI summaries could help you spot the best apps faster (By Jay Bonggolto)

Meta

AI AI AI AI AI AI AI: Meta's Q1 2026 earnings are in, and it looks like Zuckerberg's blank check for AI spending is raising some eyebrows (By Nicholas Sutrich)

New oversight: Meta gives parents a way to see what their teens are asking its AI (By Jay Bonggolto)

Three-point beast? Meta's Threads is going live with chats for big moments, like the NBA Finals (By Nickolas Diaz)

The Meta Quest 3 is about to be hit with a major price hike — grab the VR headset before it's too late (By Patrick Farmer)

Visual overhaul: WhatsApp Web themes might soon come to save us from boring gray bubbles (By Jay Bonggolto)

Quick Edit: First DMs, now replies: Instagram lets you edit pesky typos out of your comments (By Nickolas Diaz)

For the people: Meta's new LLM, Muse Spark, wants to take its AI into a 'people first' era (By Nickolas Diaz)

Playing catch-up: WhatsApp’s big update fixes the three things users have been yelling about for years (By Jay Bonggolto)

The Ruling: Jury finds Meta, YouTube 'negligent' in trial over addictive social media (By Nickolas Diaz)

Spotify

Spotify drives engagement the right way, expands into 'Fitness' with Peloton (By Nickolas Diaz)

A worthy upgrade: Spotify looks brand new on tablets with a design rework that makes total sense (By Nickolas Diaz)

Reading is good: Spotify, Bookshop expand to US, and 'Page Match' gets huge language support (By Nickolas Diaz)

Podcasts this time: Podcasts meet Spotify's Prompted Playlists for curious Premium US beta testers (By Nickolas Diaz)

A vast web: It's in the SongDNA: an 'immersive' Spotify test that lets you discover everyone involved (By Nickolas Diaz)

Your taste: All to your liking: Spotify's 'Taste Profile' beta puts you in charge of the music you find (By Nickolas Diaz)

Inspired: Spotify's 'About the Song' beta lets you into the stories behind the artist's creation (By Nickolas Diaz)

Turn the page: I'll never stop reading with Spotify, Bookshop's partnership and 'Page Match' on Android (By Nickolas Diaz)

I can show you the... This Spotify update lets us take our lyrics offline, and there's more for users globally (By Nickolas Diaz)

X is down again: X faces major outage as 78K users report disruption this morning (By Nandika Ravi)

Where are you? X's new 'transparent' location labels for accounts have people questioning everything (By Nickolas Diaz)

Partial outage: Facing trouble logging into X? You're not alone — here’s the scoop! (By Nandika Ravi)

Twitter is down: It wasn't just you — X (Twitter) resolved a major outage today (By Brady Snyder)

Whistleblower calls out Twitter for spambots and mishandling user data (By Derrek Lee)

What is free speech? (By Jerry Hildenbrand)

Twitter makes it easier to search for Communities on the web (By Derrek Lee)

Massive Twitter outage ends after about 90 minutes (By Michael L Hicks)

House committee summons Meta, Alphabet, Twitter and Reddit over Capitol riot (By Jay Bonggolto)

Wear OS

About time: Google just announced Wear OS 6.1, and it adds a time zone feature I've wanted for years (By Sanuj Bhatia)

A proper upgrade: Spotify on Wear OS just got a big redesign that makes it much easier to use (By Sanuj Bhatia)

Standalone protection: Wear OS can now send life-saving earthquake alerts without your phone (By Sanuj Bhatia)

Wear this: The best Wear OS watch (By Michael L Hicks)

Visual mess: A major Wear OS 6 bug is ruining custom watch faces on Pixel and Galaxy Watches (By Jay Bonggolto)

Wearables Weekly: What I expect and want to see from Android smartwatches in 2026 (By Michael L Hicks)

Wearables Weekly: Wear OS in 2025: How Pixel, Galaxy, and OnePlus smartwatches fared against our expectations (By Michael L Hicks)

You're green! Androidify for Wear OS: turn yourself into an Android bot for your Pixel Watch (By Nickolas Diaz)

Google Weather is down: Google Weather is broken on older Wear OS watches, but a fix is coming (By Jay Bonggolto)

YouTube

The Premium pressure: YouTube on mobile makes livestream ads way less annoying, but there's a caveat (By Jay Bonggolto)

Half off YouTube: You can now get YouTube Premium for half price with Google AI Pro (By Sanuj Bhatia)

Shorts, gone (almost): YouTube now lets you turn off Shorts (By Sanuj Bhatia)

Premium gets pricier: YouTube Premium just got a price hike, and it's not a small one (By Sanuj Bhatia)

Trying something new: YouTube tests a couple of speedy, 'on-the-go' features for busy Android viewers (By Nickolas Diaz)

Sort of supported: YouTube now works with Android Auto, but not in the way you'd expect (By Sanuj Bhatia)

Check it out: Discovering YouTube videos with 'Previews' is a change it wants to see if you like (By Nickolas Diaz)

Take this, but make it this: A twist like no other: YouTube Shorts lets you 'Reimagine' with Gemini and Veo (By Nickolas Diaz)

This might be it: YouTube just approved 30-second unskippable ads for TV (By Sanuj Bhatia)

More about Apps & Software

You like ads? Google flips the script, reportedly considers putting ads in Gemini app (By Nickolas Diaz)

Your phone, your rules: Google AI is creeping into every app on your phone. Here is how to disable it (By Sanuj Bhatia)

Gemini takes the wheel: Google is upgrading in-car Assistant to Gemini, and it’s more than just a refresh (By Jay Bonggolto)