Apps & Software

Latest about Apps & Software

-

-

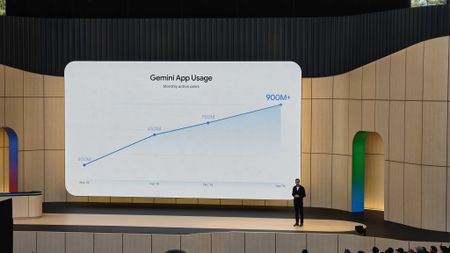

Gemini takeover5 important Gemini updates from Google I/O that could genuinely save you timeBy Sanuj Bhatia Published

Gemini takeover5 important Gemini updates from Google I/O that could genuinely save you timeBy Sanuj Bhatia Published -

Upgrades across the boardGoogle’s new $100 AI plan wants to turn Gemini into a full productivity machineBy Jay Bonggolto Published

Upgrades across the boardGoogle’s new $100 AI plan wants to turn Gemini into a full productivity machineBy Jay Bonggolto Published -

Speed plus smartsGoogle thinks Gemini 3.5 Flash can finally make AI agents more usefulBy Jay Bonggolto Published

Speed plus smartsGoogle thinks Gemini 3.5 Flash can finally make AI agents more usefulBy Jay Bonggolto Published -

Let AI handle itGoogle's new Gemini features will take all the annoying busywork off your plateBy Sanuj Bhatia Published

Let AI handle itGoogle's new Gemini features will take all the annoying busywork off your plateBy Sanuj Bhatia Published -

Assistant rebootPlanning a wedding? Google's new Gemini Spark AI agents might actually helpBy Sanuj Bhatia Published

Assistant rebootPlanning a wedding? Google's new Gemini Spark AI agents might actually helpBy Sanuj Bhatia Published -

Conversations replace queriesGoogle is giving Search its biggest overhaul in 25 yearsBy Jay Bonggolto Published

Conversations replace queriesGoogle is giving Search its biggest overhaul in 25 yearsBy Jay Bonggolto Published -

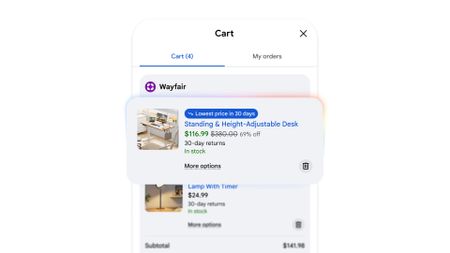

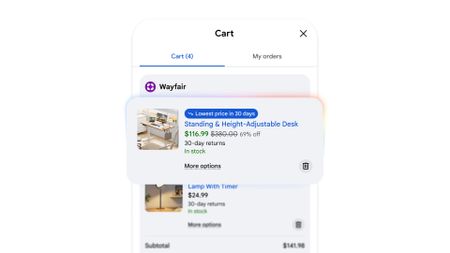

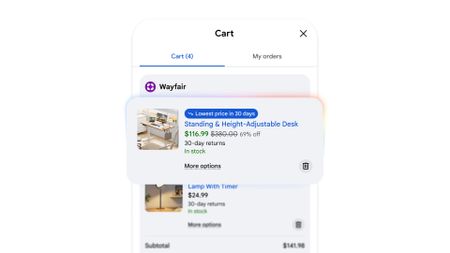

AI x online shoppingForget CamelCamelCamel: Google's new Universal Cart will track prices and find deals for youBy Sanuj Bhatia Published

AI x online shoppingForget CamelCamelCamel: Google's new Universal Cart will track prices and find deals for youBy Sanuj Bhatia Published

-

Explore Apps & Software

AI

-

-

Gemini takeover5 important Gemini updates from Google I/O that could genuinely save you timeBy Sanuj Bhatia Published

Gemini takeover5 important Gemini updates from Google I/O that could genuinely save you timeBy Sanuj Bhatia Published -

Let AI handle itGoogle's new Gemini features will take all the annoying busywork off your plateBy Sanuj Bhatia Published

Let AI handle itGoogle's new Gemini features will take all the annoying busywork off your plateBy Sanuj Bhatia Published -

Assistant rebootPlanning a wedding? Google's new Gemini Spark AI agents might actually helpBy Sanuj Bhatia Published

Assistant rebootPlanning a wedding? Google's new Gemini Spark AI agents might actually helpBy Sanuj Bhatia Published -

Genie "magic"Dreaming of Project Genie? Google I/O unveils 'Street View,' putting imaginary worlds into oursBy Nickolas Diaz Published

Genie "magic"Dreaming of Project Genie? Google I/O unveils 'Street View,' putting imaginary worlds into oursBy Nickolas Diaz Published -

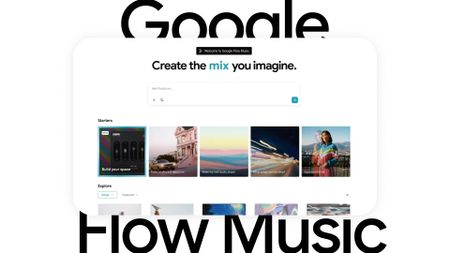

AI crazyGoogle I/O gets into a Flow: preps Flow Music app and generative editing for on-the-goBy Nickolas Diaz Published

AI crazyGoogle I/O gets into a Flow: preps Flow Music app and generative editing for on-the-goBy Nickolas Diaz Published -

AI x online shoppingForget CamelCamelCamel: Google's new Universal Cart will track prices and find deals for youBy Sanuj Bhatia Published

AI x online shoppingForget CamelCamelCamel: Google's new Universal Cart will track prices and find deals for youBy Sanuj Bhatia Published -

Gemini goes furtherNot just an OS: Gemini Intelligence shines with Android automation this summerBy Nickolas Diaz Published

Gemini goes furtherNot just an OS: Gemini Intelligence shines with Android automation this summerBy Nickolas Diaz Published -

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published

No one wanted thisGoogle just gave me the best reason ever to uninstall ChromeBy Nickolas Diaz Published -

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published

You like ads?Google flips the script, reportedly considers putting ads in Gemini appBy Nickolas Diaz Published

-

Android Auto

-

-

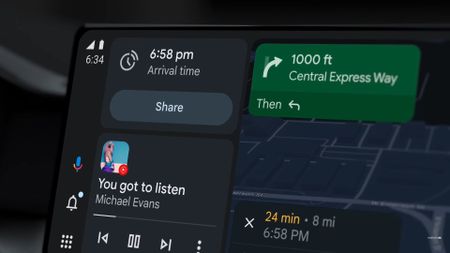

Take a driveAndroid Auto gets a fresh take on Google Maps navigation, teases more Gemini at the Android ShowBy Nickolas Diaz Published

Take a driveAndroid Auto gets a fresh take on Google Maps navigation, teases more Gemini at the Android ShowBy Nickolas Diaz Published -

Smarter driving5 Android Auto settings I always change on any new Android phoneBy Roydon Cerejo Published

Smarter driving5 Android Auto settings I always change on any new Android phoneBy Roydon Cerejo Published -

Android Auto bug is making the signal icon vanish for some usersBy Sanuj Bhatia Published

Android Auto bug is making the signal icon vanish for some usersBy Sanuj Bhatia Published -

Finally fixedAndroid's Driving Mode is finally smarter about when it turns onBy Sanuj Bhatia Published

Finally fixedAndroid's Driving Mode is finally smarter about when it turns onBy Sanuj Bhatia Published -

Where'd it go?Something's missing: Android Auto users report a jarring bug in Google MapsBy Nickolas Diaz Published

Where'd it go?Something's missing: Android Auto users report a jarring bug in Google MapsBy Nickolas Diaz Published -

It might finally happenAndroid Auto may finally let you cast media from your phoneBy Sanuj Bhatia Published

It might finally happenAndroid Auto may finally let you cast media from your phoneBy Sanuj Bhatia Published -

Gemini-fiedGemini transforms Android Auto with new AI features for a smarter driveBy Nandika Ravi Published

Gemini-fiedGemini transforms Android Auto with new AI features for a smarter driveBy Nandika Ravi Published -

Bye, Assistant!Gemini for Android Auto is starting to replace Google AssistantBy Brady Snyder Published

Bye, Assistant!Gemini for Android Auto is starting to replace Google AssistantBy Brady Snyder Published -

Eyes on the RoadGoogle starts quietly rolling out an essential button on Android AutoBy Nickolas Diaz Published

Eyes on the RoadGoogle starts quietly rolling out an essential button on Android AutoBy Nickolas Diaz Published

-

Android OS

-

-

Emojis leakHere's your first look at more of Google's new 3D emojis for Android 17By Sanuj Bhatia Published

Emojis leakHere's your first look at more of Google's new 3D emojis for Android 17By Sanuj Bhatia Published -

Sharing gets easierQuick Share is getting a useful upgrade for sharing files with iPhonesBy Sanuj Bhatia Published

Sharing gets easierQuick Share is getting a useful upgrade for sharing files with iPhonesBy Sanuj Bhatia Published -

What's nextAndroid 17: Everything you need to knowBy Harish Jonnalagadda Last updated

What's nextAndroid 17: Everything you need to knowBy Harish Jonnalagadda Last updated -

Big Android reveals5 huge Android 17 upgrades are coming this year — Here are the best new features announced at The Android ShowBy Sanuj Bhatia Published

Big Android reveals5 huge Android 17 upgrades are coming this year — Here are the best new features announced at The Android ShowBy Sanuj Bhatia Published -

Android gets personalAndroid 17 is fixing two things that have annoyed me for yearsBy Sanuj Bhatia Published

Android gets personalAndroid 17 is fixing two things that have annoyed me for yearsBy Sanuj Bhatia Published -

Android gets creativeGoogle is finally treating Android creators seriously with Android 17By Sanuj Bhatia Published

Android gets creativeGoogle is finally treating Android creators seriously with Android 17By Sanuj Bhatia Published -

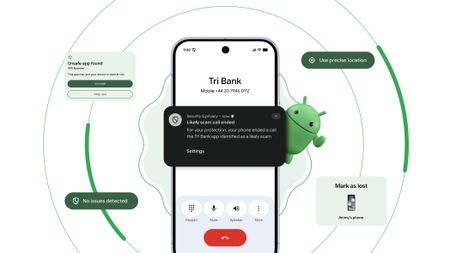

Stay safeYour Android security and privacy got huge upgrades—The Android Show reveals allBy Nickolas Diaz Published

Stay safeYour Android security and privacy got huge upgrades—The Android Show reveals allBy Nickolas Diaz Published -

Magic PointerI didn't think the desktop cursor needed reinventing — Googlebooks are proving me wrongBy Brady Snyder Published

Magic PointerI didn't think the desktop cursor needed reinventing — Googlebooks are proving me wrongBy Brady Snyder Published -

Spring cleaningIt's time to do some spring cleaning with the Files by Google app to free up storage on your Android phoneBy Brady Snyder Last updated

Spring cleaningIt's time to do some spring cleaning with the Files by Google app to free up storage on your Android phoneBy Brady Snyder Last updated

-

Gmail

-

-

A fresh coat of paintGoogle's new gradient icons for Gmail, Calendar, Drive and more are finally rolling outBy Sanuj Bhatia Published

A fresh coat of paintGoogle's new gradient icons for Gmail, Calendar, Drive and more are finally rolling outBy Sanuj Bhatia Published -

That's a big cutGoogle may be cutting free Gmail storage for new accounts down to 5GBBy Sanuj Bhatia Published

That's a big cutGoogle may be cutting free Gmail storage for new accounts down to 5GBBy Sanuj Bhatia Published -

Useful parroting?Gmail's 'Help me write' can now mimic how you speak to create emails for youBy Nickolas Diaz Published

Useful parroting?Gmail's 'Help me write' can now mimic how you speak to create emails for youBy Nickolas Diaz Published -

New address, same inboxI changed my embarrassing Gmail username without losing anything, and you can tooBy Sanuj Bhatia Published

New address, same inboxI changed my embarrassing Gmail username without losing anything, and you can tooBy Sanuj Bhatia Published -

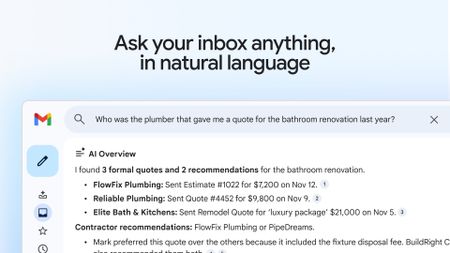

That's a steep priceGmail's new AI Inbox is here, but it'll cost you $250 a monthBy Sanuj Bhatia Published

That's a steep priceGmail's new AI Inbox is here, but it'll cost you $250 a monthBy Sanuj Bhatia Published -

Gemini takes overGmail is getting a new AI inbox as Google brings Gemini front and centerBy Sanuj Bhatia Published

Gemini takes overGmail is getting a new AI inbox as Google brings Gemini front and centerBy Sanuj Bhatia Published -

A long-awaited featureGmail might finally let you switch to a new address without starting overBy Sanuj Bhatia Published

A long-awaited featureGmail might finally let you switch to a new address without starting overBy Sanuj Bhatia Published -

Get a previewGmail gives Android users a window into email attachments with this updateBy Nickolas Diaz Published

Get a previewGmail gives Android users a window into email attachments with this updateBy Nickolas Diaz Published -

Sleigh Bells ring...Google brings a unified 'Purchases' tab to Gmail ahead of the holiday rushBy Nickolas Diaz Published

Sleigh Bells ring...Google brings a unified 'Purchases' tab to Gmail ahead of the holiday rushBy Nickolas Diaz Published

-

Google Assistant

-

-

Bye, AssistantGoogle Assistant could shut down for Android Auto in March 2026By Brady Snyder Published

Bye, AssistantGoogle Assistant could shut down for Android Auto in March 2026By Brady Snyder Published -

New look!Google's song search evolves with a modern Gemini-inspired UI on AndroidBy Nandika Ravi Published

New look!Google's song search evolves with a modern Gemini-inspired UI on AndroidBy Nandika Ravi Published -

New look!Google's voice and song search gets a major overhaul on Android after yearsBy Nandika Ravi Published

New look!Google's voice and song search gets a major overhaul on Android after yearsBy Nandika Ravi Published -

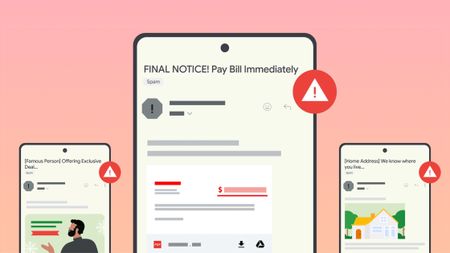

Stay in the knowGoogle introduces new tools to help users fight against evolving phishing scams effectivelyBy Nandika Ravi Published

Stay in the knowGoogle introduces new tools to help users fight against evolving phishing scams effectivelyBy Nandika Ravi Published -

Google OutageGoogle, Gmail, and Meet hit by widespread outage, causing login issuesBy Nandika Ravi Published

Google OutageGoogle, Gmail, and Meet hit by widespread outage, causing login issuesBy Nandika Ravi Published -

New voicesGoogle is spicing up its voice list on Search, according to a new leakBy Nandika Ravi Published

New voicesGoogle is spicing up its voice list on Search, according to a new leakBy Nandika Ravi Published -

New Google AI plansNew Google AI Pro and $249/month Ultra subscription announced at I/OBy Vishnu Sarangapurkar Published

New Google AI plansNew Google AI Pro and $249/month Ultra subscription announced at I/OBy Vishnu Sarangapurkar Published -

Easy-peesyGoogle app on iOS gets a new feature that will 'Simplify' text onlineBy Nandika Ravi Published

Easy-peesyGoogle app on iOS gets a new feature that will 'Simplify' text onlineBy Nandika Ravi Published -

ByeGoogle officially killed Driving Mode after stripping most of its features in 2024By Brady Snyder Published

ByeGoogle officially killed Driving Mode after stripping most of its features in 2024By Brady Snyder Published

-

Google Maps

Don't stress: Get in: Android Auto EVs see AI battery predictions in Google Maps for stress-free plans (by Nickolas Diaz)

It's just like new: 'Immersive Navigation' in Google Maps is like opening a new app for the modern era of driving (by Nickolas Diaz)

Limiting features? Google Maps might keep things from you if you don't sign in to an account (by Nickolas Diaz)

Test run: Google Maps might get a trial space for new features, and 'Ask Maps' could headline (by Nickolas Diaz)

Out on a walk: My walks just got a lot better, as Google says 'Gemini in Navigation' supports more (by Nickolas Diaz)

Better battery life: How to enable and use Google Maps power saving mode (by Brady Snyder)

Let's go there: Google Maps gets a major upgrade with Gemini for smooth navigation on Android and iOS (by Nickolas Diaz)

Let's go there: Google Maps gets a Gemini boost to help you navigate the roads like a pro (by Nickolas Diaz)

Double Rainbow: Here's what the redesigned Google Photos and Maps icons look like (by Nickolas Diaz)

Google Pay

How To: This is the best Google Pay feature you're not using in India (by Harish Jonnalagadda)

How To: I used this hidden Google Pay feature to automate credit card bill payments (by Harish Jonnalagadda)

No more drain: Android’s next update is finally addressing your phone’s biggest battery hogs (by Jay Bonggolto)

On Time: Google Wallet is helping Android users effortlessly catch their plane or train (by Nickolas Diaz)

Quick Taps: Google Pay's fresh updates will unlock better shopping rewards for Chrome users (by Nickolas Diaz)

Pay Your Way: Android users get another option to pay later with Klarna on Google Pay (by Nickolas Diaz)

Easier access: Google Wallet brings digital ID support to UK, more US states (by Nandika Ravi)

Now arriving at... Google Wallet brings real-time train status alerts to Android, and teases I/O 2025 (by Nickolas Diaz)

Next stop is... Londoners can join the Google Pay 'Tube Challenge' for badges and city lore (by Nickolas Diaz)

Google Play Store

Play gets smarter: Google Play is getting a huge AI upgrade with Ask Play and Play Shorts (by Sanuj Bhatia)

Create apps: This Google AI tool can now build Android apps from text prompts (by Sanuj Bhatia)

It's a problem: Google puts apps that'll drain your battery on blast in updated Play Store listings (by Nickolas Diaz)

A downgrade to downgrading: Google just made uninstalling system app updates more complicated (by Sanuj Bhatia)

Free cash: Here's when Google Play Store users will get an automatic cash settlement payout (by Brady Snyder)

You win! Focus Friend and Pokémon TCG Pocket shine in Google Play's Best of 2025 awards (by Nickolas Diaz)

Find it faster: Google Play enhances search with new 'Where to watch' streaming feature (by Sanuj Bhatia)

No more sifting: Google's upcoming review search feature might soon help you save time on the Play Store (by Jay Bonggolto)

Gift cards go green: You can now send Starbucks and Disney gift cards straight from Google Play (by Jay Bonggolto)

Meta

AI everywhere: Meta's Muse Spark arrives on AI Glasses Gen 1, Ray-Ban Display waits for now (by Nickolas Diaz)

AI, VR, AI, VR: Meta Connect 2026 confirmed for September, and we're thinking AI and Quest (by Nickolas Diaz)

Per your request: Meta's AI plans look agentic with a potential Instagram bot that shops for you (by Nickolas Diaz)

Encryption is gone: Meta can see your Instagram messages now, and it's time to stop using it (by Sanuj Bhatia)

AI AI AI AI AI AI AI: Meta's Q1 2026 earnings are in, and it looks like Zuckerberg's blank check for AI spending is raising some eyebrows (by Nicholas Sutrich)

New oversight: Meta gives parents a way to see what their teens are asking its AI (by Jay Bonggolto)

Three-point beast? Meta's Threads is going live with chats for big moments, like the NBA Finals (by Nickolas Diaz)

The Meta Quest 3 is about to be hit with a major price hike — grab the VR headset before it's too late (by Patrick Farmer)

Visual overhaul: WhatsApp Web themes might soon come to save us from boring gray bubbles (by Jay Bonggolto)

Spotify

Spotify drives engagement the right way, expands into 'Fitness' with Peloton (by Nickolas Diaz)

A worthy upgrade: Spotify looks brand new on tablets with a design rework that makes total sense (by Nickolas Diaz)

Reading is good: Spotify, Bookshop expand to US, and 'Page Match' gets huge language support (by Nickolas Diaz)

Podcasts this time: Podcasts meet Spotify's Prompted Playlists for curious Premium US beta testers (by Nickolas Diaz)

A vast web: It's in the SongDNA: an 'immersive' Spotify test that lets you discover everyone involved (by Nickolas Diaz)

Your taste: All to your liking: Spotify's 'Taste Profile' beta puts you in charge of the music you find (by Nickolas Diaz)

Inspired: Spotify's 'About the Song' beta lets you into the stories behind the artist's creation (by Nickolas Diaz)

Turn the page: I'll never stop reading with Spotify, Bookshop's partnership and 'Page Match' on Android (by Nickolas Diaz)

I can show you the... This Spotify update lets us take our lyrics offline, and there's more for users globally (by Nickolas Diaz)

X is down again: X faces major outage as 78K users report disruption this morning (by Nandika Ravi)

Where are you? X's new 'transparent' location labels for accounts have people questioning everything (by Nickolas Diaz)

Partial outage: Facing trouble logging into X? You're not alone — here’s the scoop! (by Nandika Ravi)

Twitter is down: It wasn't just you — X (Twitter) resolved a major outage today (by Brady Snyder)

Whistleblower calls out Twitter for spambots and mishandling user data (by Derrek Lee)

What is free speech? (by Jerry Hildenbrand)

Twitter makes it easier to search for Communities on the web (by Derrek Lee)

Massive Twitter outage ends after about 90 minutes (by Michael L Hicks)

House committee summons Meta, Alphabet, Twitter and Reddit over Capitol riot (by Jay Bonggolto)

Wear OS

About time: Google just announced Wear OS 6.1, and it adds a time zone feature I've wanted for years (by Sanuj Bhatia)

A proper upgrade: Spotify on Wear OS just got a big redesign that makes it much easier to use (by Sanuj Bhatia)

Standalone protection: Wear OS can now send life-saving earthquake alerts without your phone (by Sanuj Bhatia)

Wear this: The best Wear OS watch (by Michael L Hicks)

Visual mess: A major Wear OS 6 bug is ruining custom watch faces on Pixel and Galaxy Watches (by Jay Bonggolto)

Wearables Weekly: What I expect and want to see from Android smartwatches in 2026 (by Michael L Hicks)

Wearables Weekly: Wear OS in 2025: How Pixel, Galaxy, and OnePlus smartwatches fared against our expectations (by Michael L Hicks)

You're green! Androidify for Wear OS: turn yourself into an Android bot for your Pixel Watch (by Nickolas Diaz)

Google Weather is down: Google Weather is broken on older Wear OS watches, but a fix is coming (by Jay Bonggolto)

YouTube

More AI slop: Google's new YouTube AI tools could make AI slop impossible to escape (by Sanuj Bhatia)

The Premium pressure: YouTube on mobile makes livestream ads way less annoying, but there's a caveat (by Jay Bonggolto)

Half off YouTube: You can now get YouTube Premium for half price with Google AI Pro (by Sanuj Bhatia)

Shorts, gone (almost): YouTube now lets you turn off Shorts (by Sanuj Bhatia)

Premium gets pricier: YouTube Premium just got a price hike, and it's not a small one (by Sanuj Bhatia)

Trying something new: YouTube tests a couple of speedy, 'on-the-go' features for busy Android viewers (by Nickolas Diaz)

Sort of supported: YouTube now works with Android Auto, but not in the way you'd expect (by Sanuj Bhatia)

Check it out: Discovering YouTube videos with 'Previews' is a change it wants to see if you like (by Nickolas Diaz)

Take this, but make it this: A twist like no other: YouTube Shorts lets you 'Reimagine' with Gemini and Veo (by Nickolas Diaz)

More about Apps & Software

AI x online shopping: Forget CamelCamelCamel: Google's new Universal Cart will track prices and find deals for you (by Sanuj Bhatia)

Play gets smarter: Google Play is getting a huge AI upgrade with Ask Play and Play Shorts (by Sanuj Bhatia)

Create apps: This Google AI tool can now build Android apps from text prompts (by Sanuj Bhatia)