How does Google's Real Tone camera feature work?

Best answer: The same machine learning that helps improve portrait photos and power Magic Eraser also makes the Pixel 6 camera more inclusive for all skin tones through its Real Tone feature.

What is Real Tone?

Google developed its Real Tone camera tech with a mission to make its camera and image products more equitable when photographing people of color, especially those with darker skin tones. A historical lack of diverse testing means that today's cameras weren't built to accurately portray all skin tones in their natural beauty. Smartphones continue to carry that bias, ultimately delivering unflattering photos for people of color. But it's not just smartphone cameras that struggle here. All sorts of camera-enabled tech has had issues with people who aren't light-skinned.

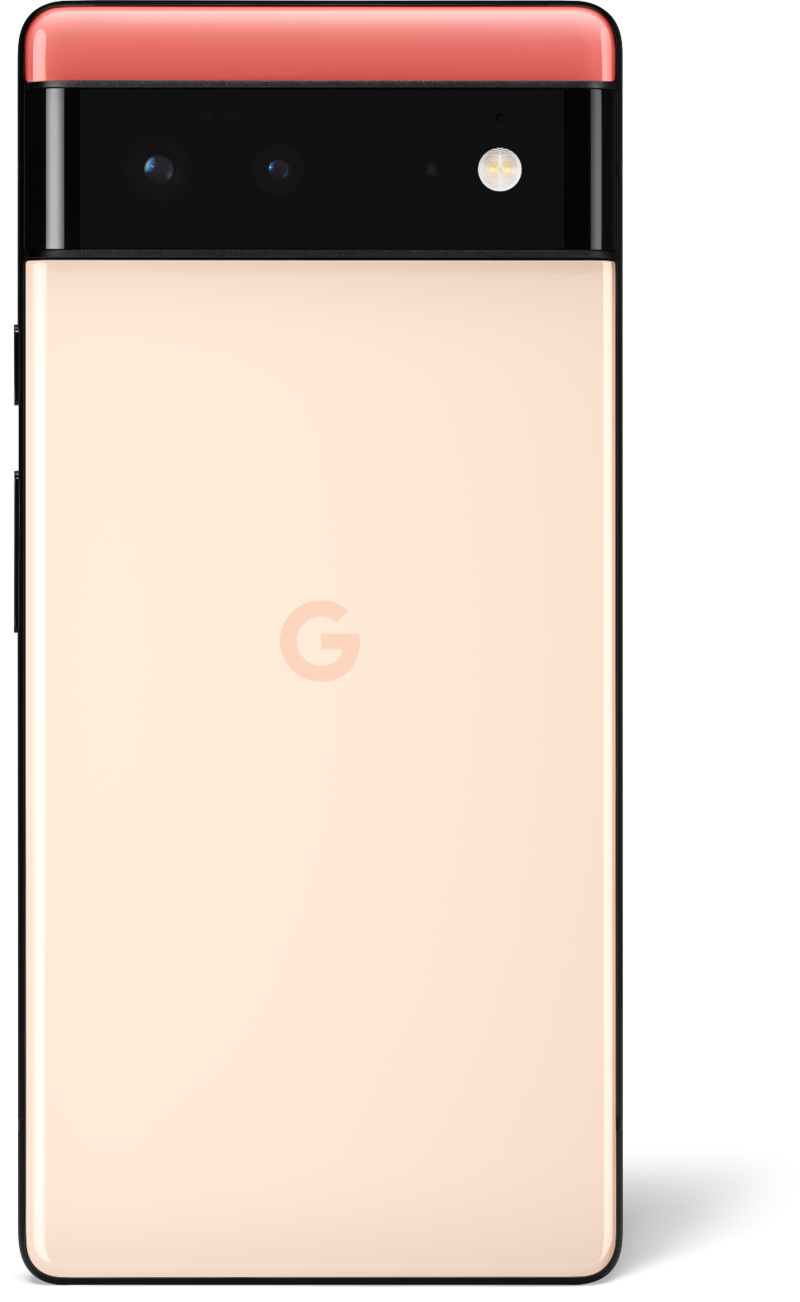

So with the launch of its Pixel 6, Google is trying to tackle this issue using Real Tone. With this feature, the camera hardware and software work in tandem to match the actual tone of the people in frame. And it works surprisingly well, especially for a first-generation product.

How it works isn't that simple to explain without sounding like there's a good bit of magic at play. But really, it's no different than any other aspect of computational photography that powers the best Android camera phones. It just needed a company to spend the time and money to work on it.

When you tap the shutter button in the camera app on your phone, the work of building a great photograph has already started. Camera lenses and sensors aren't particularly smart, but they're very good at their intended purposes: collecting and focusing light. Using multiple captures and electronic filters, the sensor collects data at very specific points of light in red, green, and blue.
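To make "specific points of light in red, green, and blue" a little more concrete, here is a minimal sketch of how a color filter over the sensor leaves each point with a single color reading. It assumes a repeating red-green-green-blue (Bayer-style) layout, which is a common arrangement but not something Google has confirmed for this camera, and the names `filter_color` and `mosaic` are made up for illustration.

```python
# Hypothetical RGGB layout: an assumption for illustration,
# not a description of the Pixel 6 sensor.
BAYER_RGGB = (("R", "G"), ("G", "B"))
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def filter_color(row, col):
    """Return which color filter sits over the sensor point at (row, col)."""
    return BAYER_RGGB[row % 2][col % 2]


def mosaic(scene):
    """Reduce a full-color scene to one reading per sensor point.

    `scene` is a list of rows, each a list of (r, g, b) tuples.
    Only the channel that the filter at each position lets through
    is kept, so every point ends up with a single number.
    """
    return [
        [pixel[CHANNEL_INDEX[filter_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(scene)
    ]


# A 2x2 patch of one uniform color.
patch = [[(200, 120, 80)] * 2 for _ in range(2)]
raw = mosaic(patch)
print(raw)  # [[200, 120], [120, 80]]
```

Even though the patch is a single color, the four readings all differ, because each one only measured red, green, or blue. Turning those partial readings back into full-color pixels is part of the processing that comes next.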

That data isn't a photo, though. It's just data. It takes some processing to turn millions of individual points of light into an image, and that's where Google has always been really good. Using machine learning and AI, the camera software can look for shapes and contrasts to identify a face, differentiate between the subject and the background, and more. One of the most important things that needs to happen to camera sensor data is making the colors in the finished photograph match what a human eye sees.
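One classic way to push colors toward "what the eye sees" is automatic white balance. The sketch below uses the textbook gray-world heuristic, which assumes the average of a scene should come out neutral gray. This is not Google's method, only a simple stand-in to show what this kind of color correction does, and the function names are hypothetical.

```python
def gray_world_gains(pixels):
    """Compute per-channel gains that make the channel averages equal."""
    n = len(pixels)
    means = [sum(p[ch] for p in pixels) / n for ch in range(3)]
    gray = sum(means) / 3
    # Leave a channel untouched if it is completely black.
    return [gray / m if m else 1.0 for m in means]


def apply_gains(pixels, gains):
    """Scale each channel by its gain and clamp to the 0-255 range."""
    return [
        tuple(min(255, round(p[ch] * gains[ch])) for ch in range(3))
        for p in pixels
    ]


# Two pixels that share the same warm, reddish-brown color
# at different brightness levels.
frame = [(200, 100, 50), (100, 50, 25)]
gains = gray_world_gains(frame)
balanced = apply_gains(frame, gains)
print(balanced)  # [(117, 117, 117), (58, 58, 58)]
```

Notice that the warm color is gone entirely. A global rule like this knows nothing about what is in the frame, so when a warm tone fills the picture it gets treated as a color cast and corrected away.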


That's where Real Tone shines. The camera software was refined to evaluate the skin tones it sees and expand the number of different shades it thinks a person can be. Think about the people you know and how much their skin color can differ from one another's or from yours. Humans aren't just beige or brown, and camera software shouldn't act like they are. If you're a white person like I am, you probably haven't ever had an issue with your skin color in photos. But a lot of people always have.

This wasn't an easy undertaking — but it was a necessary one. Over-brightening darker skin or applying less contrast just doesn't work, and we have billions of photos that prove it. Something like Google's Real Tone project is long overdue.
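To see how over-brightening can happen, consider a deliberately naive auto-exposure rule that applies one gain to the whole frame so its average brightness lands on a fixed target. This is a sketch under stated assumptions, not how any particular phone works: the target value and the function name are hypothetical, and brightness is simplified to a number between 0.0 and 1.0.

```python
TARGET_MEAN = 0.5  # hypothetical target for average brightness (0.0 to 1.0)


def naive_auto_exposure(luminances, target=TARGET_MEAN):
    """Apply one global gain so the average brightness hits the target."""
    mean = sum(luminances) / len(luminances)
    gain = target / mean if mean else 1.0
    adjusted = [min(1.0, value * gain) for value in luminances]
    return adjusted, gain


# A tight portrait where darker skin fills most of the frame.
portrait = [0.125, 0.25, 0.25, 0.375]
adjusted, gain = naive_auto_exposure(portrait)
print(gain)      # 2.0
print(adjusted)  # [0.25, 0.5, 0.5, 0.75]
```

Skin that was recorded at 0.25 comes out at 0.5, twice as bright as it really was, simply because the rule assumes every scene should average out to the same middle value.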

Finally, a proper Pixel flagship has arrived.

After years of fatal flaws and stagnant design, Google has finally given us the shock-and-awe upgrade we've been waiting for. Tensor's AI prowess makes most Assistant features feel magically fast. Major camera updates for hardware and processing give us significantly better photos, especially when photographing people of color and moments of action.

Jerry is an amateur woodworker and struggling shade tree mechanic. There's nothing he can't take apart, but many things he can't reassemble. You'll find him writing and speaking his loud opinion on Android Central and occasionally on Threads.