Google I/O 2021 preview: Here's what to expect from the return of Google's annual developer conference

After canceling last year's event due to the COVID-19 pandemic, which is still ongoing, Google is going ahead with its annual developer conference this year. Google I/O will be a completely free, fully virtual event where the company will show off some of what it's been working on over the past 12 months or so. While that includes a lot of developer talk, there will also be plenty of consumer-facing products on display. The good thing about Google I/O is that, while it's a developer conference, regular folks and tech enthusiasts can enjoy it too and get insight into the cool things that may soon reach their phones, computers, or smart speakers. You can take a look at the top five features announced at Google I/O since 2015 to get an idea of the kinds of announcements and revelations that come out of this event.

The three-day conference will host several keynotes, technical sessions, workshops, and Ask Me Anything sessions (AMAs). To take part in the sessions, you have to register on the Google I/O website. The conference takes place May 18-20, so it's right around the corner, but there's still time to speculate about what we could see.

Android 12

Android 12 has now reached its final Developer Preview, and a ton of hidden features have been discovered since the first rollout. We've had a look at new conversation widgets, a gaming toolbar, an updated haptics system, and even some form of a native theming system, with visual changes all around. Of course, there are also plenty of under-the-hood updates, as well as better privacy features.

It's unclear which of these features will make it to the final version of Android 12, but Google I/O should give us a much better idea since it coincides with the launch of the first public beta. This could also be when we learn which of the best Android phones will be eligible for the beta program, a list that varies each year depending on the manufacturer.

Google Assistant and Nest Products

One of the biggest announcements in recent years for the Assistant was Google Duplex, which let Google's AI assistant take over a call to make appointments on your behalf. It's unclear if Google has anything quite as impressive as that to show off this year, but we're likely to get many updates on Google Assistant's capabilities. Google has apparently been working on a new Memory feature, which aggregates all of your collections into one place, organizes them, and pulls any relevant contextual information from them. The feature has already been spotted working in some form, so we may get more information on it soon.

Google isn't expected to go all out on Nest product announcements this year, especially given the recent launch of devices like the Nest Audio speaker and Nest Hub (2nd Gen). We may, however, get a look at a new Nest Hello video doorbell, which was reportedly spotted recently in the Google Home app. So far, the only major change we can see, besides what might be a taller chassis, is a USB-C port on the back in place of the outdated micro-USB port.

Apps & Services

You can likely expect Google to announce many updates and new features for its apps and services. Google Pay just received a major overhaul but is still limited to a few countries, and now that it has shed its beta status, we might see a wider rollout. Google Photos and Maps have also regularly received new features, such as a new video editor and dark mode. Still, Google could have additional features coming for these apps and more.

Whitechapel

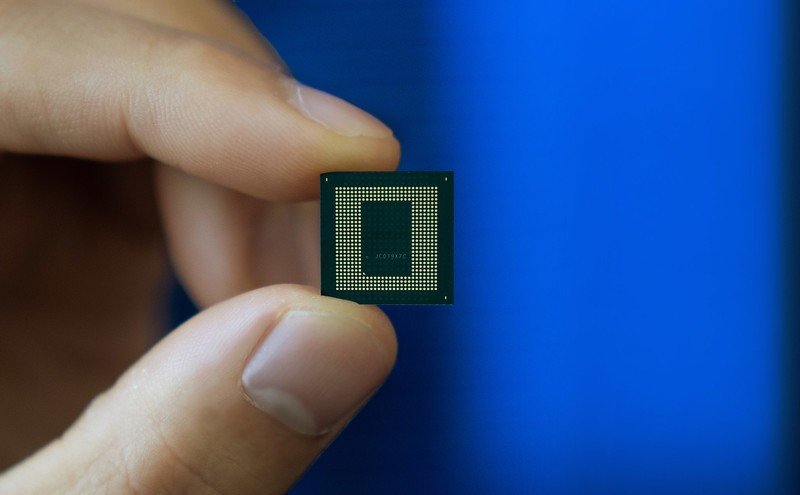

If you haven't heard, Google is reportedly working on its own SoC, codenamed "Whitechapel." That means it could stop relying on Qualcomm for the chips in future Pixel smartphones, which would be especially welcome amid a global chip shortage affecting production across multiple industries. From what we can tell about the SoC, it'll fall short of flagship chips like the Qualcomm Snapdragon 888, but Google will likely lean on machine learning to do a lot of the heavy lifting.

This one's a maybe, but it's possible Google could make some announcement about the new chip.

Wear OS

No one's really holding their breath for Google to make any big announcements around Wear OS, but the past year has shown that Google is still committed to the platform in some shape or form. Qualcomm launched its new Snapdragon Wear 4100, and Google rolled out its H-MR2 update to improve battery life and bring other under-the-hood enhancements. Google also just opened up Wear OS Tiles to third-party developers, which could greatly expand the platform's functionality.

With rumors of a Samsung Galaxy Watch 4 running Wear OS, significant improvements to Wear OS could be something to look out for at this year's Google I/O.

Pixel Buds A

There have been rumors of Google working on a new pair of Google Pixel Buds for 2021. While FCC filings hint at earbuds with more power output, the name suggests that Google might be preparing to launch a cheaper version of its earbuds. A supposed image of the earbuds has been spotted with a new color option, and Google later accidentally announced the buds early in a tweet that wasn't taken down quickly enough. If the leaks prove to be real, and it looks like they are, the Pixel Buds A-Series could end up being some of the best cheap true wireless earbuds on the market.

And more...

Of course, Google has a vast product portfolio, and it's unlikely that everything will get a spotlight, but many of the announcements and technical sessions could lay the groundwork for features coming to other products. Chrome OS and Chromecast with Google TV are just some of the other things likely to be mentioned in some shape or form. Google has been pushing Google Lens, which was announced at the 2017 conference, quite heavily lately, so we'll probably get a look at what the company has in store for its visual AI application. Google has already released the full schedule for the conference, so you can take a look at the various workshops and sessions.

What not to expect

So much focus had been on Google's next Pixel flagship that we almost forgot the company still has to (has to?) launch the Google Pixel 5a, at least until images of the device leaked. You'd be forgiven if you mistook the device for last year's Google Pixel 5 or even the Google Pixel 4a 5G, since the company's recent smartphones have all looked the same. That said, while the Pixel 5a was expected to launch at Google I/O, the company has stated that the device will come later this year, likely alongside the Google Pixel 6, and suggested that it will see a limited launch in the U.S. and Japan only.

Rumors of a Pixel Watch have started surfacing yet again. However, according to leaker Jon Prosser, the smartwatch is not expected to launch until later this year and will likely arrive alongside the next Pixel smartphone. If he's to be believed, it could prove to be an exciting time for Wear OS enthusiasts.

Derrek is the managing editor of Android Central, helping to guide the site's editorial content and direction to reach and resonate with readers, old and new, who are just as passionate about tech as we are. He's been obsessed with mobile technology since he was 12, when he discovered the Nokia N90, and his love of flip phones and new form factors continues to this day. As a fitness enthusiast, he has always been curious about the intersection of tech and fitness. When he's not working, he's probably working out.