10 things iOS 16 'stole' from Android

There are plenty of new features coming to iOS 16, but how many are "borrowed" from Android?

The dust is still settling from WWDC '22, where various Apple executives and team members took to the stage to show off what's to come. Apple revealed the M2 chip, new MacBook Air and MacBook Pro models, and changes to watchOS, and we also got a glimpse of what's coming to the iPhone with iOS 16.

In some regards, Apple is ahead of the curve when it comes to software features, but for the most part, Apple tends to hold back until years after something first appeared on Android. That holds true for iOS 16, and here are 10 things iOS 16 stole from Android.

Lock Screen widgets

The most obvious feature that Apple is adopting for iOS 16 is the addition of Lock Screen widgets on iPhone. With updates to Apple's WidgetKit framework, developers can now create widgets that provide quick, glanceable pieces of information right on the Lock Screen.

There are three different sections that can be customized, but widgets can only be placed below the clock. Apple is also limiting the number of options to a total of four widgets, provided that they are all small. But you can mix and match between different widgets, as some like the Calendar app have either a 1x1 or 2x1 widget that can be used.

So how long has Apple been behind the curve? Only about 10 years, as Android 4.2 Jelly Bean introduced the ability to add up to six widgets to the Lock Screen. Unfortunately, the feature wasn't long for this world: there were plenty of privacy concerns, and Lock Screen widgets were removed in Android 5.0 Lollipop. There are some third-party apps that bring this functionality back, but without fidgeting around, we're "stuck" with built-in features like At a Glance.

Something to keep in mind with this new feature, at least if you're a fan of tablets: While the clock font has changed on the iPad, you do not have the ability to add widgets on the iPad's Lock Screen. It's a rather surprising omission, and feels similar to when Apple brought Home Screen widgets to the iPhone first, before bringing them to the iPad.

Always-on Display (rumored)

This one is kind of cheating, as it's not a feature that is available for even the iPhone 13 Pro models with the iOS 16 Developer Beta. But the eagle-eyed team at 9to5Mac found references to new frameworks that refer to an always-on display coming to the iPhone. As we mentioned, this isn't available for existing iPhone models, but recent rumors suggest Apple is set to implement an AOD with the iPhone 14 Pro and iPhone 14 Pro Max when those devices debut later this year.


Within iOS 16, there are three new frameworks that have been added that relate to backlight management of the iPhone’s display. Backlight management is a key aspect of enabling an always-on feature.

Each of these frameworks includes references to an always-on display capability. Theoretically, you could speculate that these always-on features were added in reference to the existing always-on display features of the Apple Watch, but that’s not the case here.

Funnily enough, the always-on display wasn't even pioneered on Android. It was first introduced on the Nokia 6303 before arriving in 2010 on Nokia phones that used an OLED panel. It really hit its stride with the Nokia Windows Phones, before Samsung took the feature and ran with it on the Galaxy S7 all the way back in 2016. Needless to say, if this actually comes to fruition, it's another long-overdue feature that iOS users will finally enjoy.

There's always a catch with this kind of stuff, as the AOD was originally rumored for the iPhone 13 Pro and iPhone 13 Pro Max with their OLED displays. However, the feature was pulled at the last minute due to concerns about a decrease in battery life.

Live Text

All the way back in 2017, during the I/O 2017 keynote, Google introduced a (for the time) all-new image recognition technology dubbed Google Lens. Since then, Google has continued to update the service, making it possible to point your camera at something and extract information.

At I/O 2022, Google also announced a new feature called "scene exploration," which allows you to use the best Android phones to identify multiple products around you. With just a tap, you can learn more about those products, see their ratings, and filter out some of the results. This feature isn't readily available just yet, but Google says that it's in the pipeline.

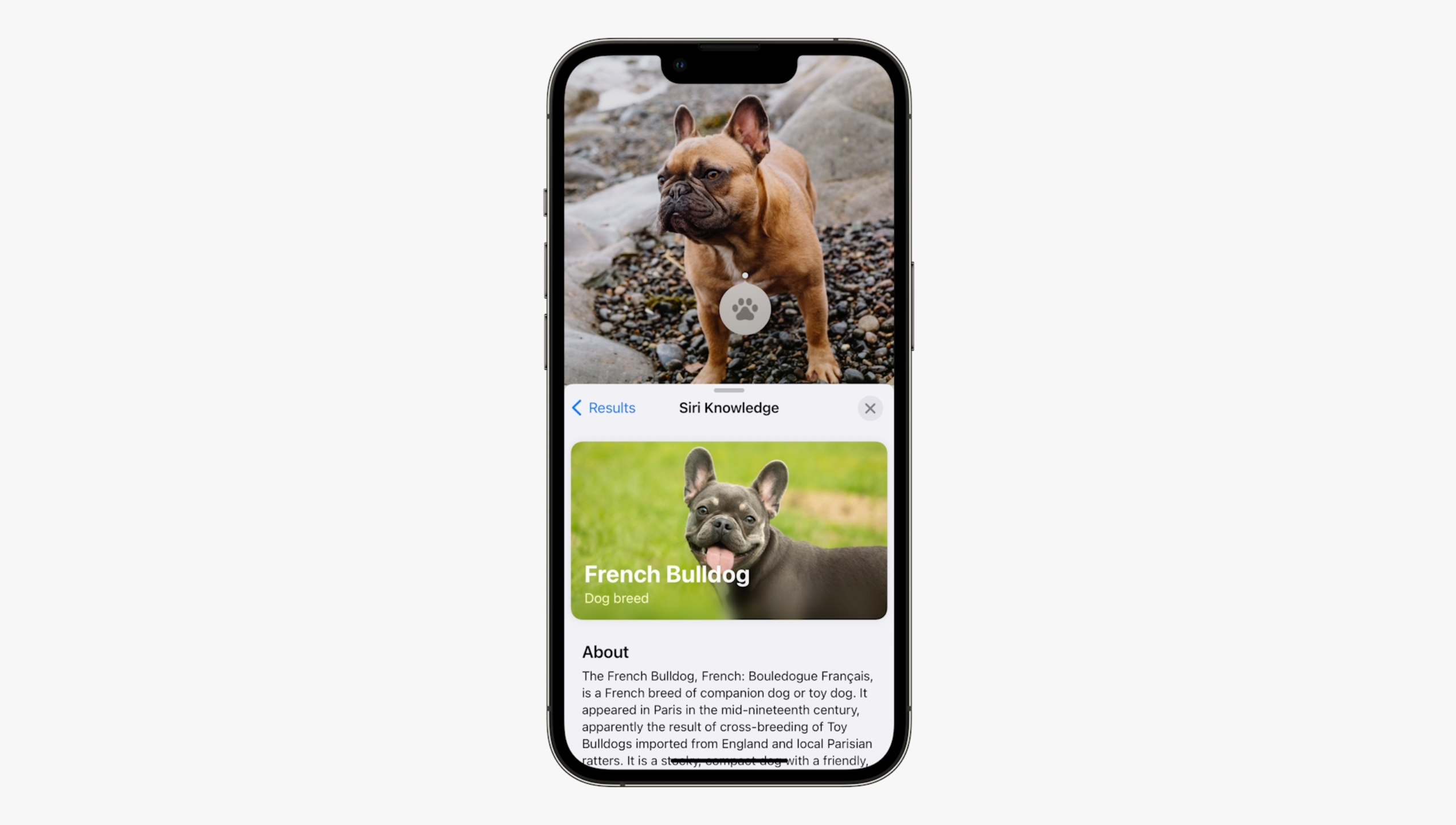

Instead of just working with Google to bring Lens to iPhone users, Apple has developed its own image recognition technologies called Live Text and Visual Look Up. These were first introduced at WWDC '21 before making their way into the final version of iOS 15 last fall. Using "on-device intelligence," your iPhone can recognize text and other objects, letting you interact with what's on your screen, including translating a sign while you're traveling in a foreign country.

With iOS 16, Visual Look Up is also getting a bit of love, as the feature is being expanded to recognize the likes of "birds, insects, and statues." One feature that iOS 16 doesn't steal from Android is the ability to tap and hold on a subject within an image, lift it from the background, and then place it in a different app.

Shared Photo Libraries

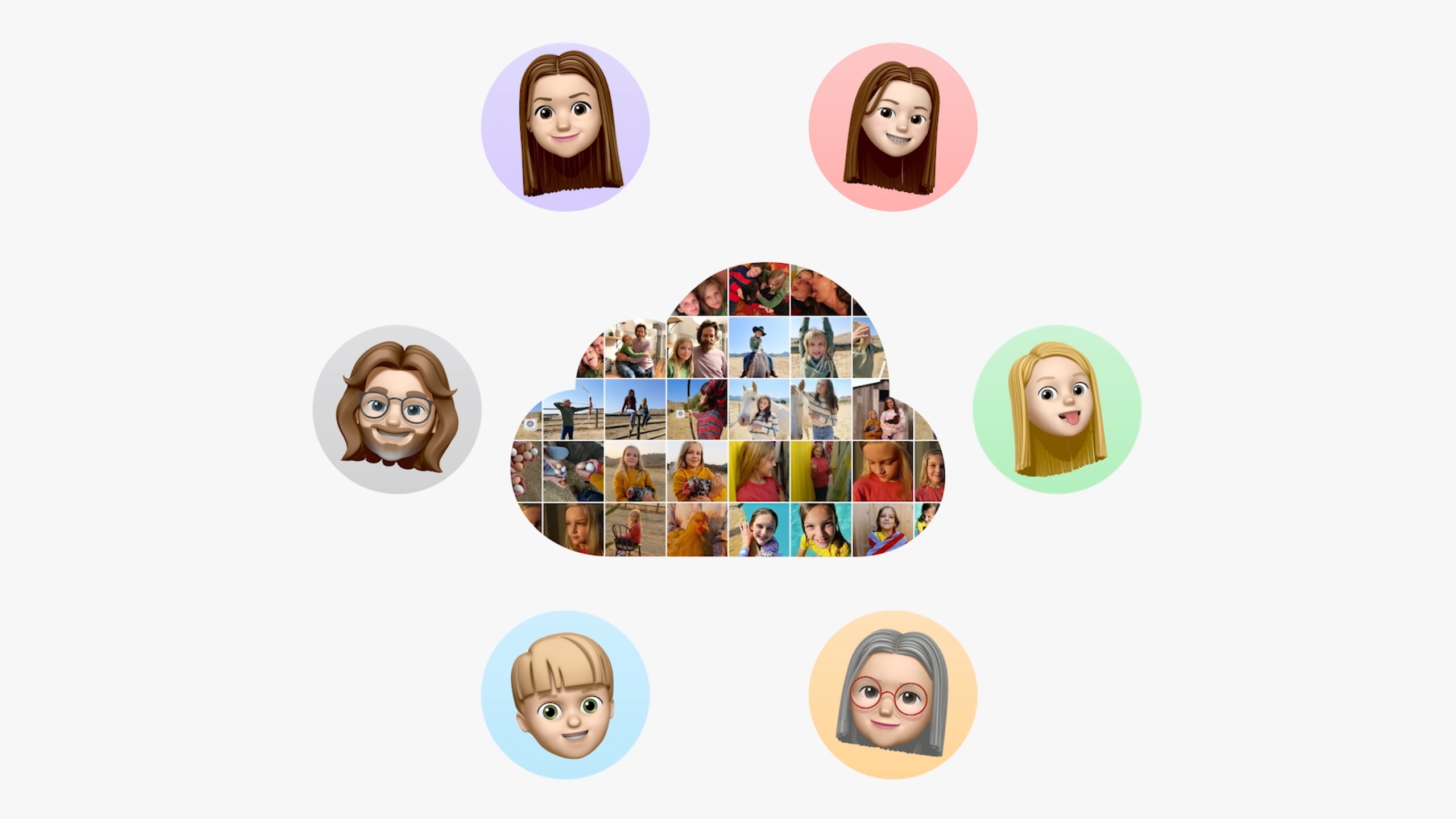

One of the most frustrating aspects of trying to share photos or albums of photos with your friends and family is that there isn't a single place for you to collaboratively share them. Well, at least for iOS users. Google has had Shared Libraries available since 2017, announced alongside Suggested Sharing with version 3.0 of the Google Photos app. For reference, the most recently released version of Google Photos carries a version number of 5.92, so it's been available for quite a long time.

On the whole, the iCloud Shared Photo Library is essentially the same, but there are some potentially frustrating caveats. The first is that you can only share albums with up to five other people. It's great to be able to have a central location where you and your friends or family can share photos and videos. But chances are, you'll run into that five-person limit rather quickly. It's not even really a fair comparison, as Google Photos allows for unlimited "contributors" to an album, and there's also a way to set up Partner Sharing with a loved one.

Standalone Fitness app

It's been eight years since the Google Fit app was released to the Android masses, providing users with a single app to log their different workouts and health metrics. For as much love as the Apple Watch gets (for good reason), it's absolutely astounding that the Fitness app has not been available as a standalone app. With iOS 16, the Fitness app will likely be an "oh that's cool, but I'll never use it" kind of feature.

That's simply because iPhone users, regardless of whether they own an Apple Watch or not, likely already have a health and metric tracking app that they rely on. It's kind of like what happened to Google Fit for years, until recently when Google seemingly started caring more about it.

Improvements to Mail

No, we aren't talking about Elon Musk's push for editable tweets. But Apple's built-in Mail client for iOS pales in comparison to almost every other email app on mobile. Even the macOS version of Mail.app is an entirely different experience compared to the one found on your iPhone. However, Apple is bringing some long-awaited features to its mobile email app, such as the ability to Remind Later, Follow Up, Schedule, and Undo Send.

All of these are features that have been available in Google's Gmail mobile and desktop apps for years, even if some of them originated in the gone, but not forgotten, Inbox app. Nudge, Snooze, and Undo Send have been around since 2018, with the ability to schedule emails landing in 2019. To be frank, we're happy to see new features come to the iOS client, if only because most third-party email apps are frustrating or are missing something.

Creating Map routes with multiple stops

Apple Maps arrived in September of 2012, and has since come quite a long way from the old days. You no longer need to worry about Apple's Maps app guiding you the wrong way down a one-way road. And the overall information provided is much more robust than what you might think (if you haven't used it in a while). But it's mind-boggling to think that, until iOS 16, Maps has not had the ability to add multiple stops to your route.

This is something that Google added to Maps on both iOS and Android back in 2016, making it much easier to plan out your trips. It's why some iOS users just prefer to rely on Google Maps, as it offers more than just taking you from point A to point B.

Proper app-windowing on tablets

As an avid fan of the iPad Pro, and someone who regularly pooh-poohs the Android tablet experience, I had part of my perception changed by my time with the Galaxy Tab S8 Ultra. It made me realize that Apple's iPad Pro and iPadOS really needed proper app windowing. Obviously, this has been a feature available on Android tablets for years, and it has even been expanded to the best foldable phones with their iPad Mini-like screen size.

Ignoring the awkward name, Stage Manager not only brings a form of app-windowing to the iPad, but also provides much better support for plugging into a monitor. It's not a full desktop-like replacement, as we've seen with the likes of Samsung DeX, but it is something for the iPad users out there. But in true Apple fashion, it's locked behind a wall, as only those who have an M1-powered iPad will be able to use it when iPadOS 16 lands this fall.

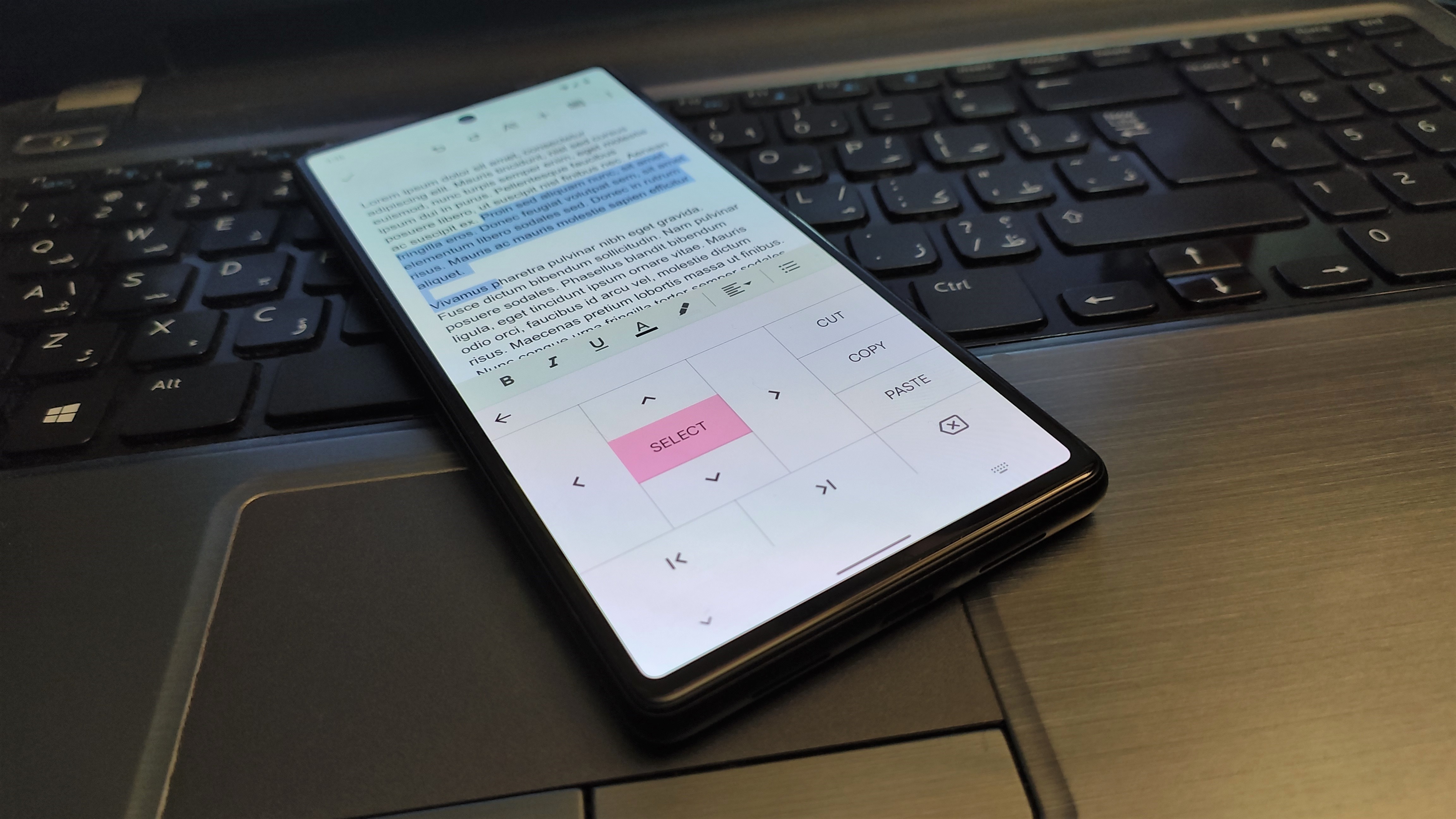

Haptic feedback on the built-in Keyboard app

Haptic feedback when typing on your phone is one of those things that you're either strongly for, or strongly against. Some people turn off the ability when they go through the setup process for a new phone, while others want as much haptic feedback as possible. For one reason or another, and despite arguably having the best haptic engine in a smartphone, haptic feedback when typing on the stock iOS keyboard has not been available.

Back in the day (circa the early days of iPhone), there were jailbreak tweaks that would enable haptic feedback for anyone who wanted it. But that still required you to jump through a bunch of hoops, just to feel your phone buzz when you tapped the keyboard. Thankfully, iOS 16 brings the option to enable haptic feedback through a toggle in the Accessibility settings. It's not enabled by default, but at least it's finally here.

Dictation powered by on-device Machine Learning

One of the latest buzzwords to hit the smartphone world is "Machine Learning." Google defines it as follows:

Machine learning is a subset of artificial intelligence that enables a system to autonomously learn and improve using neural networks and deep learning, without being explicitly programmed, by feeding it large amounts of data.

It's partially why Google's Tensor chip was so highly anticipated, as your smartphone can learn from how you use it, creating a better, more personalized experience. Dictation has long been available on our various devices, but Google ramped things up with the Pixel 6 and 6 Pro. No longer would you need to dictate punctuation, or stop to try and manually find the right emoji to add. Just hit the button, start talking, and let your phone do all the work for you.

iOS 16 introduces the same functionality for iPhone users, offering a "new on-device experience that allows users to fluidly move between voice and touch." So not only will you be able to switch between your voice and typing something out, but Apple is also bringing some of Google's most recent features to the masses. What remains to be seen is whether Apple will limit the availability of these features to specific devices once iOS 16 is officially released, or if it will be available for any iPhone that can run the latest platform version.

Is Apple's iOS closing the gap to Android?

The answer to the question posed is a pretty obvious one. Apple is closing the gap between iOS and Android. It's easy to sit and nitpick about a bunch of features that Android has had for years, but the truth remains that additional features are almost never a bad thing, at least in the sense that deciding between an Android phone and an iPhone shouldn't be a make-or-break decision.

There are plenty of people out there, myself included, who use both platforms on a daily basis, just because we like having options. What remains to be seen is whether Apple will go through the same growing pains that Android did. One example is the introduction of Lock Screen widgets, which landed in Android 4.2, but were removed in Android 5 in order to make way for an Always-on Display.

Apple still has a lot of ground to gain in some regards when compared to Android. But there are other areas, like my beloved topic of "ecosystem," where Google still falls short. All-in-all, we're happy to see these changes come to iPhone users, even if it means that we're losing reasons to complain about when new features are added to iOS in the future.

Andrew Myrick is a Senior Editor at Android Central. He enjoys everything to do with technology, including tablets, smartphones, and everything in between. Perhaps his favorite pastime is collecting different headphones, even if they all end up in the same drawer.