Google Photos and Maps are getting big AI boosts

What will Photos and Maps be able to do next? With generative AI, the possibilities seem to be endless.

What you need to know

- Google Photos and Maps are picking up new AI powers.

- Maps is letting you preview your routes in 3D before you head out using its Immersive View feature.

- Meanwhile, Photos is getting a new Magic Editor tool that makes otherwise complex edits a lot easier, so you may no longer need Photoshop.

At its I/O conference today, Google unveiled exciting new artificial intelligence capabilities for Google Maps and Photos, namely the Immersive View and Magic Editor features, both of which rely on generative AI.

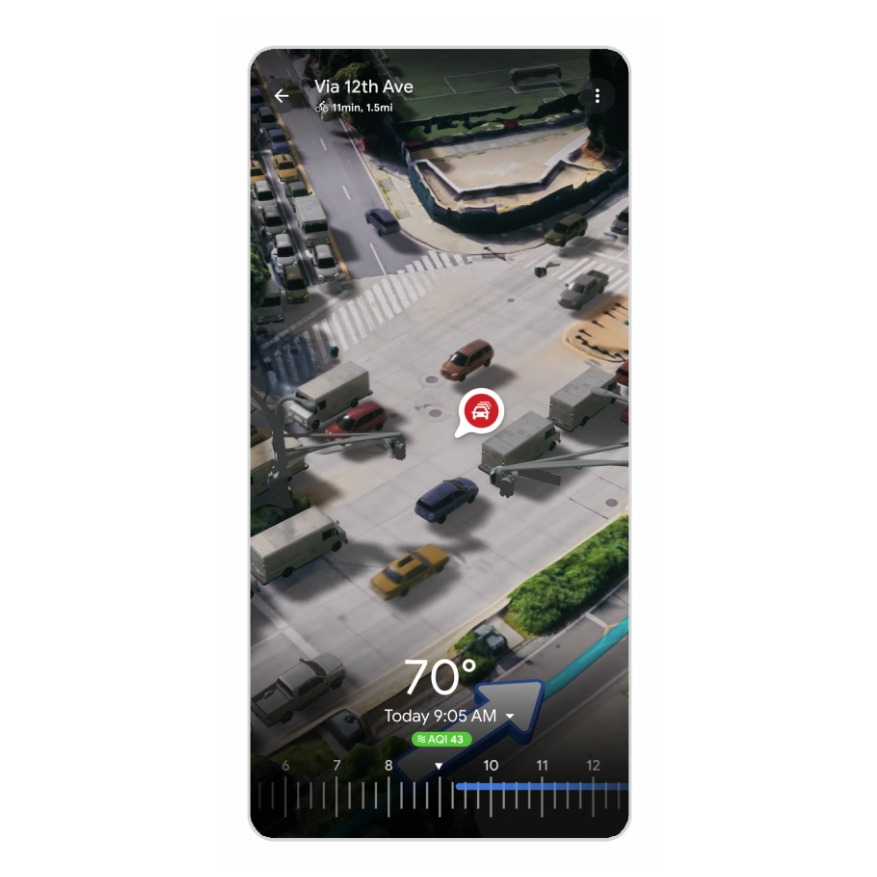

Immersive View first appeared on Maps for some users earlier this year, creating a multidimensional view of cities by fusing billions of Street View and aerial images together. Google is now bringing that feature to your routes whenever applicable, allowing you to virtually fly overhead and scope out local areas, or drop down to street level for a closer look at anything you'd like to view in detail.

Using computer vision and AI, Immersive View lets you preview every part of the trip before you head out. The generative AI-powered feature creates a detailed model of the area you're visiting, so you have a better understanding of your route in one go.

The result is an interactive experience that lets you preview bike lanes, sidewalks, intersections, and parking areas you may encounter along the way. You can also check the air quality information and visualize weather changes throughout the day by scrolling through a time slider found at the bottom of the app.

Maps also aims to help you plan around rush hour and avoid getting stuck in heavy traffic by displaying a simulation of cars on the road at any given time.

Google will roll out Immersive View for routes in the coming months in Amsterdam, Berlin, Dublin, Florence, Las Vegas, London, Los Angeles, New York, Miami, Paris, Seattle, San Francisco, San Jose, Tokyo, and Venice.

Google Photos' new Magic Editor

Google Photos is also picking up a new AI boost of its own, called Magic Editor. In a nutshell, it allows you to move a person in a photo and fill in the gaps after repositioning the subject.

According to the search giant, the new feature "will make complex edits easy." In typical Google fashion, the feature will initially be available on select Pixel phones later this year. Eventually, the tool will launch on other Android devices.

Magic Editor relies on generative AI to help you apply edits to specific portions of a photo. In the sample shown by Google, you could adjust the composition of your photo by moving a subject to the best spot in the frame.

Since repositioning a subject leaves gaps in the image, Magic Editor can also generate new content to fill them in. In Google's example, a boy sitting on a bench holding a bunch of balloons was shifted to the center of the frame. The tool then generated an extension of the bench, as well as more balloons on the left, to cover the empty space.

"While this new, experimental technology will open up exciting editing possibilities, we know there might be times when the result isn’t exactly what you imagined," Google noted in a blog post.

As part of the experiment, Mountain View will solicit feedback from early adopters to improve the feature over time.

Jay Bonggolto always keeps a nose for news. He has been writing about consumer tech and apps for as long as he can remember, and he has used a variety of Android phones since falling in love with Jelly Bean. Send him a direct message via X or LinkedIn.