This cool Light Stage is used to train Portrait Light on Google Pixel phones

What you need to know

- Portrait Light is a feature that was announced alongside the Pixel 4a 5G and Pixel 5.

- Google built a spherical light rig, the Light Stage, to photograph people under many different lighting angles.

- A machine learning model learned from this large data set how to emulate various lighting conditions.

Google Pixel smartphones may not have the most powerful or versatile camera hardware, but they more than make up for it in software. Google uses computational photography and AI to enhance images, making Pixels some of the best point-and-shoot smartphone cameras on the market. The Pixel 4a 5G and Pixel 5 both upped the ante, particularly with the addition of the new Portrait Light feature.

Google details in a blog post how Portrait Light lets the user adjust the directional lighting of an image after the photo has been taken. The feature is applied automatically to photos of people, including Night Sight shots. It's similar to a studio light feature on the LG G8, which relied more on the Time-of-Flight sensor in its selfie camera. Google's approach for the Pixels is entirely software-based, relying on machine learning models.

Google engineers first had to train their model by presenting it with example photos lit at different intensities and from different directions. Even the subject's head pose was taken into consideration, which allowed the model to learn how light falls on different faces in different conditions.

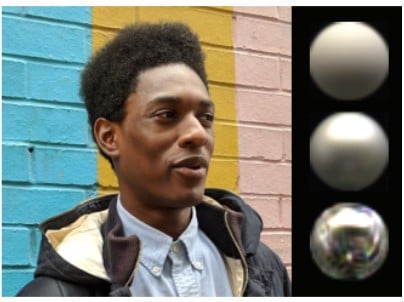

Taking these variables into account, the model would render spheres based on the illumination profile of a photograph, including color, intensity, and directionality. Then by applying common lighting practices in photography such as key light and fill light, engineers were able to determine where to best apply synthetic lighting within an image. But that was just the first step.

Google's engineers also had to demonstrate these different synthetic lighting sources for the model to replicate. To do so, they used a Light Stage, a spherical rig equipped with over 300 LED lights and more than 60 cameras, all placed at different angles. By photographing people of different genders, skin tones, face shapes, and other variables, and capturing a separate photo for each individual light, Google's engineers were able to model hundreds of different lighting conditions. Without getting into the nitty-gritty, Google was able to use the information from the Light Stage and apply it to different simulated environments.
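Capturing one photo per light is useful because light adds up: a photo lit by several lights at once is, to a good approximation, the weighted sum of the photos lit by each light alone. The sketch below illustrates that general idea only and is not Google's code. The function name `relight`, the tiny grayscale images, and the weights are all hypothetical.

```python
def relight(olat_images, weights):
    """Combine one-light-at-a-time photos into a single relit image.

    olat_images: one image per light; each image is a list of rows of
                 float pixel intensities (grayscale for simplicity).
    weights:     one brightness weight per light.
    """
    if not olat_images:
        raise ValueError("need at least one image")
    if len(olat_images) != len(weights):
        raise ValueError("need exactly one weight per light")
    height = len(olat_images[0])
    width = len(olat_images[0][0])
    relit = [[0.0] * width for _ in range(height)]
    # Light is additive, so the relit pixel is a weighted sum over lights.
    for image, weight in zip(olat_images, weights):
        for y in range(height):
            for x in range(width):
                relit[y][x] += weight * image[y][x]
    return relit


# Two hypothetical 2x2 captures: one lit from the left, one from the right.
left_lit = [[0.8, 0.2], [0.6, 0.1]]
right_lit = [[0.1, 0.7], [0.2, 0.9]]

# Simulate a strong light on the left and a dimmer one on the right.
result = relight([left_lit, right_lit], [1.0, 0.5])
```

Choosing a different set of weights stands in for a different simulated environment, which is how a few hundred single-light photos can cover many more lighting conditions than were physically captured.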

For the Portrait Light feature, it was important that the relighting effect be as efficient as possible. Portrait Light creates a light visibility map and uses it to construct a low-resolution version of the relit image while the user adjusts the lighting. Once a lighting position is selected, the low-resolution result is upscaled and applied to the original high-quality image. This allows the model to run smoothly on your device while taking up very little space, at just under 10MB.
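The "work small, then upscale" pattern can be sketched as follows. This is a minimal illustration under stated assumptions, not Google's pipeline: it assumes the lighting change can be expressed as a per-pixel brightness multiplier, uses simple nearest-neighbour upscaling, and expects image dimensions that are multiples of `factor`. The names `downscale`, `upscale`, `relight_fast`, and `gain_for_pixel` are hypothetical.

```python
def downscale(image, factor):
    """Shrink a grayscale image by averaging factor x factor blocks."""
    height, width = len(image) // factor, len(image[0]) // factor
    small = []
    for y in range(height):
        row = []
        for x in range(width):
            block = [image[y * factor + dy][x * factor + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        small.append(row)
    return small


def upscale(image, factor):
    """Enlarge an image by repeating each pixel (nearest neighbour)."""
    return [[image[y // factor][x // factor]
             for x in range(len(image[0]) * factor)]
            for y in range(len(image) * factor)]


def relight_fast(image, gain_for_pixel, factor):
    """Compute lighting gains at low resolution, then apply at full size.

    gain_for_pixel is a hypothetical stand-in for the expensive model: it
    maps a low-resolution pixel (x, y, value) to a brightness multiplier.
    """
    small = downscale(image, factor)
    # The costly step runs on the small image only.
    gains = [[gain_for_pixel(x, y, value) for x, value in enumerate(row)]
             for y, row in enumerate(small)]
    full_gains = upscale(gains, factor)
    # Apply the upscaled gains to the original pixels, clamped to 1.0.
    return [[min(1.0, pixel * gain) for pixel, gain in zip(img_row, gain_row)]
            for img_row, gain_row in zip(image, full_gains)]


# Brighten the left half of a hypothetical 4x4 image.
photo = [[0.2, 0.2, 0.4, 0.4] for _ in range(4)]
relit = relight_fast(photo, lambda x, y, v: 1.5 if x == 0 else 1.0, 2)
```

With `factor` set to 2, the stand-in model runs on a quarter as many pixels, while the fine detail in the output still comes from the original full-size image.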

The lengths to which Google goes to keep improving its Pixel camera algorithms are quite impressive. Portrait Light is automatically applied to photos taken on the Google Pixel 4 and newer, and it also works on pre-existing portrait photos taken on older Pixel smartphones, from the Pixel 2 onward. Jump into Google Photos and see how you can improve the look of your portrait photos!

Derrek is the managing editor of Android Central, helping to guide the site's editorial content and direction to reach and resonate with readers, old and new, who are just as passionate about tech as we are. He's been obsessed with mobile technology since he was 12, when he discovered the Nokia N90, and his love of flip phones and new form factors continues to this day. As a fitness enthusiast, he has always been curious about the intersection of tech and fitness. When he's not working, he's probably working out.