YouTube Premium: Everything you need to know

Why the ad-removing service is (or isn't) worth subscribing to, and how it relates to YouTube Music and YouTube TV.

Anyone who uses YouTube regularly likely knows about (and has been tempted by) YouTube Premium. The successor to YouTube Red, YouTube Premium gives you access to ad-free videos, downloads, background play, and other perks.

Basically, it's your best way to avoid all those annoying YouTube ads. It used to give you access to exclusive YouTube Originals as well, but Google no longer creates those, and all that old content is now free to watch.

So, is YouTube Premium worth paying for? Are there other benefits to a subscription besides removing ads, such as YouTube Music? And what is YouTube Premium Lite? Premium already has more than 100 million subscribers; here's what you need to know to decide whether or not to join them.

What's included in YouTube Premium?

As mentioned above, YouTube Premium comes with a load of goodies that can make its monthly fee worth paying.

- Ad-free videos

- Play videos in the background

- Download videos for offline use

- Special "listening controls" to play music in Premium

- Occasional free or discounted Google hardware and software

Google has given away a free Home Mini, Nest Mini, and Stadia Premiere Edition to its Premium subscribers in the past few years, as well as Google Store discounts on other products. New subscribers won't get any of the old giveaways, but you may get future Google hardware as a perk.

Google also tests cutting-edge new features on Premium, offering them to paying subscribers first. For example, Premium offered 4K YouTube videos before they eventually came to the free app. The same goes for picture-in-picture mode, which used to be a paid exclusive but came to everyone else about a year later.

Last but certainly not least, a YouTube Premium subscription also gives you full access to YouTube Music. With a YouTube Premium plan, you can use YouTube Music to listen to music without ads, let your tunes play in the background, and download songs/playlists for offline listening.

How much does it cost?

That's all fine and dandy, but how much will you be paying for all this?

YouTube Premium costs $13.99/month, and you can cancel your plan at any time. But if you're mainly interested in YouTube Music, that costs $10.99/month on its own. So if you were going to pay for Google's music streaming service anyway, you'd pay just $3 extra per month for ad-free YouTube.

If you don't care about YouTube Music, however, YouTube Premium may feel overpriced.

We'll also note that you can sometimes get YouTube Premium for free with other services. For example, Google Fi Unlimited Plus subscribers get six months of YouTube Premium thanks to an ongoing promotion, and Google used to offer a longer Premium trial to YouTube TV members. You'll often find that new Google or Samsung devices come with extended Premium trials, too. On its own, YouTube Premium comes with a one-month free trial.

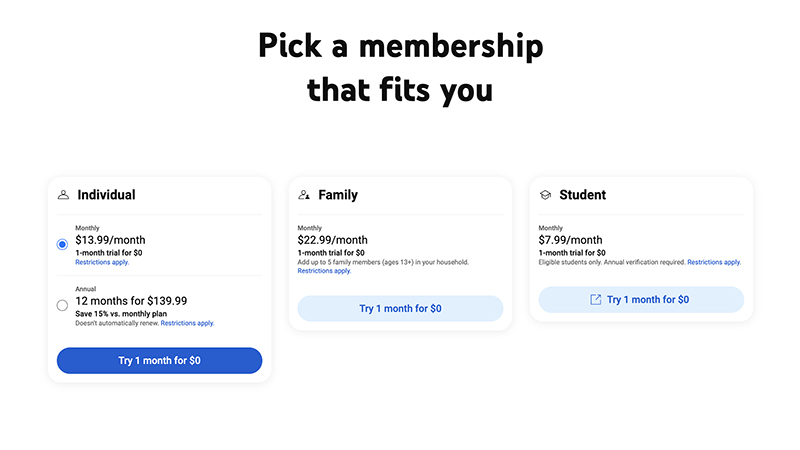

- Individual • Monthly: $13.99/month

- Individual • Annually (does not automatically renew): $139.99/year

- Family (up to five users) • $22.99/month

- Student • Monthly (annual verification required): $7.99/month
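
If you want to compare the plans above for yourself, the arithmetic is easy to sketch. The snippet below is only an illustration, not anything Google provides: the function names are made up for this example, the prices are the U.S. list prices quoted above (stored as whole cents to avoid rounding surprises), and the family figure assumes the bill is split evenly five ways.

```python
# Illustrative sketch only: prices are the U.S. list prices quoted in this
# article, stored in cents. Function names are hypothetical helpers.
PLANS = {
    "individual_monthly": 1399,
    "individual_annual": 13999,
    "family_monthly": 2299,
    "student_monthly": 799,
}


def annual_savings_cents(monthly_cents, annual_cents):
    """Amount saved over 12 months by paying once a year instead of monthly."""
    return monthly_cents * 12 - annual_cents


def per_user_cents(plan_cents, users):
    """Even split of a plan's price, rounded up to the next whole cent."""
    return -(-plan_cents // users)


def dollars(cents):
    """Format a non-negative amount of cents as a dollar string."""
    return f"${cents // 100}.{cents % 100:02d}"


if __name__ == "__main__":
    saved = annual_savings_cents(
        PLANS["individual_monthly"], PLANS["individual_annual"]
    )
    print("Annual plan saves:", dollars(saved))

    # Assumption: the family plan is shared evenly by five people.
    share = per_user_cents(PLANS["family_monthly"], 5)
    print("Family plan per person:", dollars(share))
```

With those numbers, the annual plan works out to $27.89 less than twelve monthly payments, and a family plan split five ways comes to about $4.60 per person each month.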

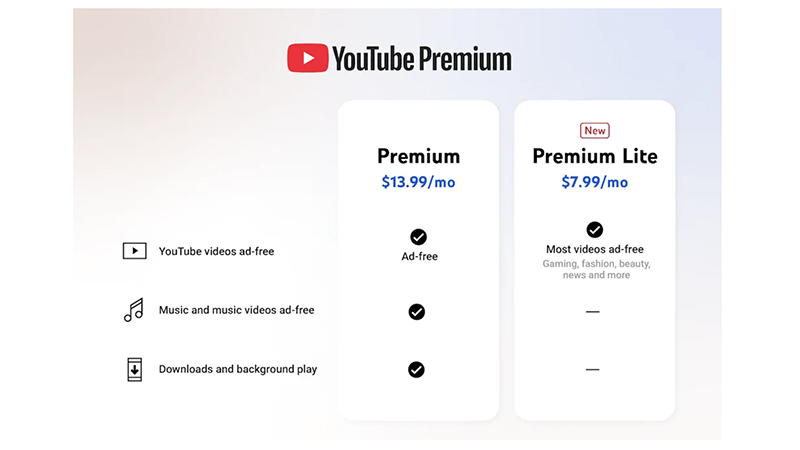

What to know about YouTube Premium Lite

Want to save a few bucks? YouTube Premium Lite is a new option that shaves quite a bit off the subscription price, with one major concession to justify the discount: ads.

While most videos will remain ad-free, including those involving gaming, fashion, beauty, news, and more, you will see ads in music content, including songs and music videos, as well as in Shorts and while searching or browsing. You also won't get background play or the ability to download content for offline viewing.

If you can deal with the occasional ad, YouTube Premium Lite has a much lower price of entry. It's ideal for those who want mostly ad-free videos but wouldn't use the rest of YouTube Premium that often. If the ads on music and Shorts get annoying, however, you can always upgrade.

- YouTube Premium Lite • Monthly: $7.99/month

Where is YouTube Premium available?

YouTube Premium Lite is currently being piloted in the U.S., Germany, Thailand, and Australia. If you want the full ad-free experience, at this time, you can sign up for YouTube Premium in the following countries:

- Algeria

- American Samoa

- Argentina

- Aruba

- Australia

- Austria

- Azerbaijan

- Bahrain

- Bangladesh

- Belarus

- Belgium

- Bermuda

- Bolivia

- Bosnia & Herzegovina

- Brazil

- Bulgaria

- Cambodia

- Canada

- Cayman Islands

- Chile

- Colombia

- Costa Rica

- Croatia

- Cyprus

- Czech Republic

- Denmark

- Dominican Republic

- Ecuador

- Egypt

- El Salvador

- Estonia

- Finland

- France

- French Guiana

- French Polynesia

- Germany

- Ghana

- Greece

- Guadeloupe

- Guam

- Guatemala

- Honduras

- Hong Kong

- Hungary

- Iceland

- India

- Indonesia

- Iraq

- Ireland

- Israel

- Italy

- Jamaica

- Japan

- Jordan

- Kazakhstan

- Kenya

- Kuwait

- Laos

- Latvia

- Lebanon

- Libya

- Liechtenstein

- Lithuania

- Luxembourg

- Malaysia

- Malta

- Mexico

- Morocco

- Nepal

- Netherlands

- New Zealand

- Nicaragua

- Nigeria

- North Macedonia

- Northern Mariana Islands

- Norway

- Oman

- Pakistan

- Panama

- Papua New Guinea

- Paraguay

- Peru

- Philippines

- Poland

- Portugal

- Puerto Rico

- Qatar

- Reunion

- Romania

- Russia ("temporarily unavailable")

- Saudi Arabia

- Senegal

- Serbia

- Singapore

- Slovakia

- Slovenia

- South Africa

- South Korea (paid membership only)

- Spain

- Sri Lanka

- Sweden

- Switzerland

- Taiwan

- Tanzania

- Thailand

- Tunisia

- Turkey

- Turks and Caicos Islands

- U.S. Virgin Islands

- Uganda

- Ukraine

- United Arab Emirates

- United Kingdom

- United States

- Uruguay

- Venezuela

- Vietnam

- Yemen

- Zimbabwe

YouTube Premium vs. YouTube TV

YouTube TV is Google's answer to cable. With it, you get access to over 100 live channels on practically any streaming device or web browser. After your initial discounted rate, you'll pay $84/month, along with an extra $10/month for 4K and additional fees for premium packages and extra channels. You can try it for 10 days for free.

While YouTube TV doesn't include Premium, if you do subscribe to both, you'll see your ad-free YouTube content on YouTube TV. Otherwise, you'll have to accept those ads in spite of paying a premium for Google's cable alternative.

YouTube Premium: Starting at $13.99/month with the first month free.

The best way to expand your YouTube experience. For the price of one lunch each month, YouTube Premium removes ads from all videos, allows you to save videos for offline viewing, and grants you access to YouTube Music Premium. It's the best YouTube experience you can get.

Michael is Android Central's resident expert on wearables and fitness. Before joining Android Central, he freelanced for years at Techradar, Wareable, Windows Central, and Digital Trends. Channeling his love of running, he established himself as an expert on fitness watches, testing and reviewing models from Garmin, Fitbit, Samsung, Apple, COROS, Polar, Amazfit, Suunto, and more.

- Namerah Saud Fatmi, Senior Editor, Accessories

- Christine Persaud, Contributor
