Here's what the Pixel 4 sees when you wave your hand in front of it

What you need to know

- Google's AI team published a blog post this week explaining how it implemented Motion Sense in the Pixel 4.

- The technology underpinning the feature is called Soli, a short-range radar sensor.

- Google also used machine learning to let the phone interpret a user's gestures and movements.

One of the Pixel 4's most exciting innovations is Motion Sense, which lets you interact with the phone using only hand gestures, without touching it at all. This week, Google's AI blog documented the technologies and processes its engineers used to bring the feature to market.

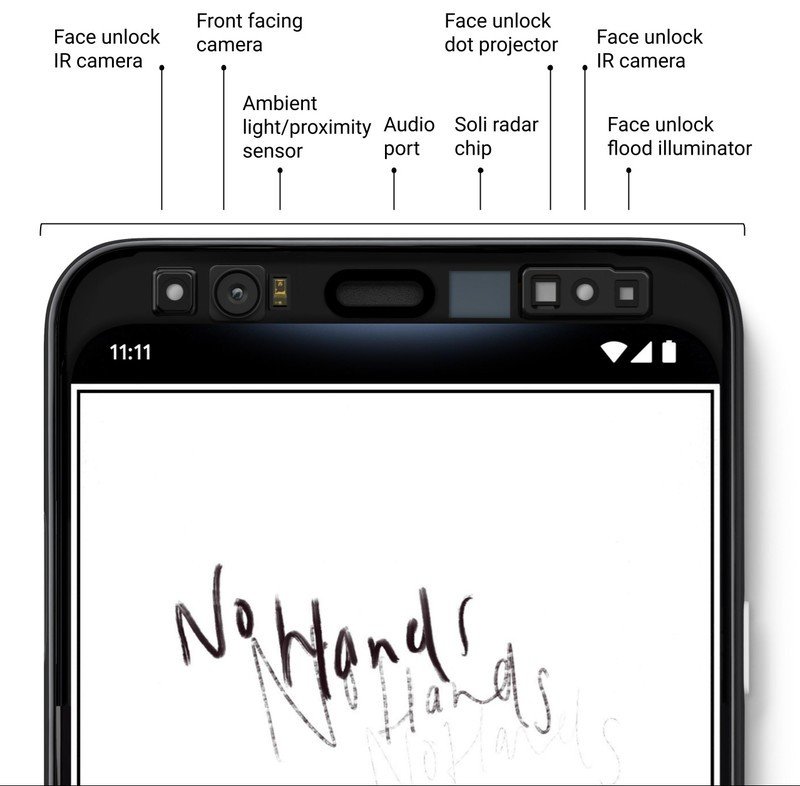

As the company's Jaime Lien and Nicholas Gillian explain, powering Motion Sense is a short-range radar sensing system that Google calls Soli. And while radar itself has existed in some form for more than a century, what makes the Pixel 4 unique is that it's the only example of such a system being integrated into a consumer device.

Here's how Soli works: the radar module emits radio waves that reflect off surrounding objects and travel back to the sensor. From the properties of those reflected waves, the module can infer things like an object's distance and size.
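For a rough sense of the physics behind that description, a radar can estimate distance from how long an echo takes to come back: the wave travels out and back at the speed of light, so the object's distance is half the total path. The Python sketch below is a generic textbook illustration, not Soli's actual signal processing; the function name and the example delay are hypothetical.

```python
# Generic time-of-flight range estimate. An illustrative sketch,
# not Soli's actual signal-processing code.

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def range_from_round_trip(delay_s: float) -> float:
    """Return the distance (m) to a reflector given the echo's round-trip delay (s).

    The wave travels to the object and back, so the one-way
    distance is half of speed multiplied by time.
    """
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    return SPEED_OF_LIGHT * delay_s / 2


# Hypothetical example: an echo arriving 2 nanoseconds after transmission
# corresponds to a hand roughly 30 cm from the sensor.
print(f"{range_from_round_trip(2e-9):.3f} m")  # prints "0.300 m"
```

The 2-nanosecond delay is a made-up value chosen to land at hand-waving distance, and it hints at why short-range radar needs extremely fine timing resolution.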

The most interesting thing about Soli, though, is that Google's custom algorithms let the module perceive motion around the phone without generating a detailed image of the objects around it. Not only is that good for privacy (your face, for example, is never captured), but it's also what allowed Google to shrink the whole system to fit in the top of a phone.

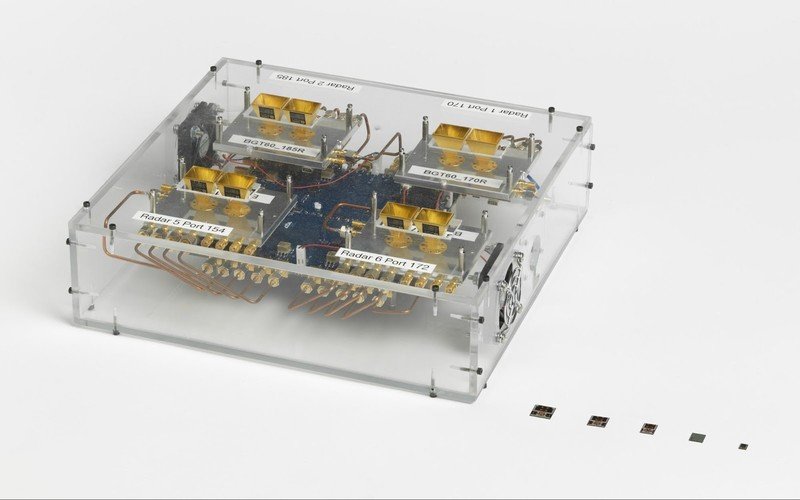

Here's a look at that process, as the company iteratively shrank the desktop-sized prototype down to its current size:

Shrinking the system to fit in a phone wasn't the only challenge the team faced. They also developed new techniques to counter the degradation of the radar signal caused by audio playing near the Soli sensor. Those techniques are why Motion Sense keeps working even while you're listening to music.

The end result is shown below, a visual representation of what Soli "sees":


Note that the axes don't represent two-dimensional physical space. Instead, one shows the radial distance from the sensor and the other the velocity at which an object approaches the phone, but I'll leave those technical details to the actual experts. You can read the blog post in full here, and if you're academically curious, also check out this research article by the team behind Soli.
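For readers who do want a small taste of those details, the velocity axis rests on the Doppler effect: an object moving toward the radar shifts the reflected wave's frequency by f_d = 2·v·f_c / c, so the radial velocity is v = f_d·c / (2·f_c). The Python sketch below applies that textbook formula. The 60 GHz carrier frequency and the 400 Hz shift are assumptions picked for illustration, and this is not Google's implementation.

```python
# Illustrative Doppler-velocity calculation. A textbook sketch,
# not Google's implementation.

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def radial_velocity(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Return radial velocity (m/s) from a measured Doppler shift.

    For a radar whose transmitter and receiver sit together, the echo is
    shifted by f_d = 2 * v * f_c / c, so v = f_d * c / (2 * f_c).
    A positive value means the object is approaching the sensor.
    """
    if carrier_hz <= 0:
        raise ValueError("carrier frequency must be positive")
    return doppler_shift_hz * SPEED_OF_LIGHT / (2 * carrier_hz)


# Assumed 60 GHz carrier; the 400 Hz shift is a made-up example value.
v = radial_velocity(400.0, 60e9)
print(f"{v:.2f} m/s")  # prints "1.00 m/s"
```

Pairing a value like this with a distance estimate places a reflector at one point on a distance-versus-velocity plot, which is the kind of view the axes above describe.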

Lien and Gillian end the post by promising to keep exploring novel applications for a miniature radar system in a phone, and I, for one, can't wait to find out what they come up with next!