Google's accessibility app uses AI to give users a voice with just a glance

What you need to know

- Speech therapist Richard Cave worked with a team at Google on an experimental app designed for persons with disabilities.

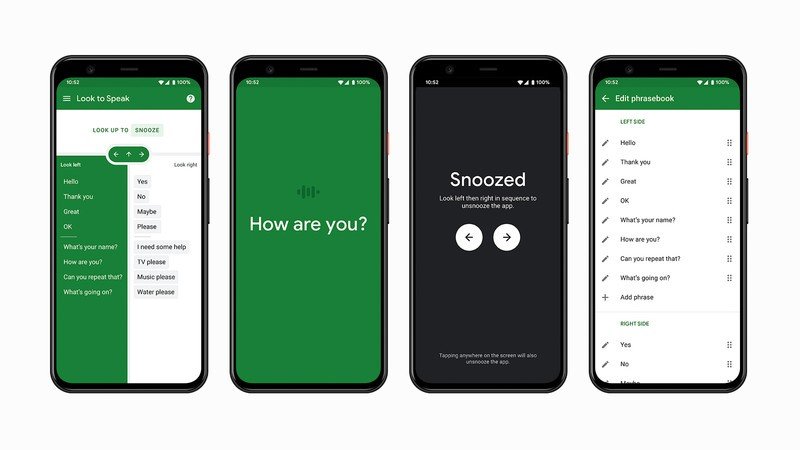

- Look to Speak lets users communicate by using their eyes to select phrases that their phone will speak aloud.

- The app is designed to supplement, rather than replace, existing systems that use gaze to control communication.

Smartphones are extremely useful and are getting smarter and faster every day. But despite that, they can still prove difficult to navigate for users with speech or motor impairments. Recently, Google pushed an update to its Voice Access feature that improves the interface and makes it easier to navigate with just the sound of your voice. But for users who may not be able to speak or physically interact with their device, a small team at Google has come up with a solution.

With the help of machine learning and the expertise of speech and language therapist Richard Cave, the team has designed a new feature called Look to Speak that can help users communicate more easily with their smartphones. The feature, which is part of Experiments with Google, lets users select various phrases by looking left or right. Depending on where a user looks, the responses will be narrowed down until the correct phrase is left. Users confirm their selections by looking off-screen or take a break by glancing up. Once a phrase is selected, the phone will read it aloud.
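The narrowing-down step can be pictured with a short Python sketch. Google has not published the details here, so this is only an illustration under assumptions: the phrase list is split into a left and a right half on each glance, and the names `split_phrases` and `narrow_by_gaze` are hypothetical, not part of the app. Gaze detection, confirmation, and the glance-up break are left out.

```python
from typing import List, Optional, Sequence, Tuple


def split_phrases(phrases: Sequence[str]) -> Tuple[List[str], List[str]]:
    """Split the remaining phrases into a left and a right column.

    Assumption: an even split, with the extra phrase going left.
    """
    mid = (len(phrases) + 1) // 2
    return list(phrases[:mid]), list(phrases[mid:])


def narrow_by_gaze(phrases: Sequence[str], glances: Sequence[str]) -> Optional[str]:
    """Narrow the phrase list one glance at a time.

    Each glance ("left" or "right") keeps only that column. Returns the
    selected phrase once a single phrase remains, or None if the glances
    run out before the list is narrowed to one.
    """
    remaining = list(phrases)
    for glance in glances:
        if len(remaining) == 1:
            break  # already narrowed to one phrase
        left, right = split_phrases(remaining)
        if glance == "left":
            remaining = left
        elif glance == "right":
            remaining = right
        else:
            raise ValueError(f"unknown glance direction: {glance!r}")
    return remaining[0] if len(remaining) == 1 else None


if __name__ == "__main__":
    phrases = ["Hello", "Yes", "No", "Thank you", "What is going on?"]
    # Two glances to the right are enough to isolate the last phrase.
    print(narrow_by_gaze(phrases, ["right", "right"]))
```

Because each glance roughly halves the list, even a few dozen phrases would need only a handful of glances in this sketch, which fits the short-message use the team describes.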

The feature is straightforward and quite intuitive. It relies on the smartphone's ability to detect where a user is looking, something that happens entirely on-device to protect privacy. The only part of the system that still requires physically interacting with the smartphone is changing settings, such as personalizing phrases and adjusting eye gaze sensitivity. This would likely still depend on assistance from an able-bodied aide.

"Look To Speak could work where other communication devices couldn't easily go"

According to team member Zedebee Pedersen, access was a big focus when developing this feature, and the team concentrated on "creating simple interfaces for smaller devices like smartphones, that don't rely on external pieces of hardware...". Putting a system like this on a smartphone gives non-verbal users the opportunity to better communicate in a number of situations where it would otherwise be too difficult, such as outdoors or on public transportation. And with the rise of cheap yet powerful smartphones this year, the large selection of low-cost options puts a feature like this within even easier reach. But Richard Cave sees Look to Speak as more of a companion to larger dedicated systems.

"We're not replacing all this kind of heavy-duty aid stuff because there's a lot of functionality in there. Look to Speak is for those important short messages where the other communication device can't go."

To develop a system like Look to Speak, it's important to get real user input, so Cave and the team at Google worked with various communities and individuals on testing the app, including an artist named Sarah who uses an eye gaze system to communicate. The accompanying video showcases how a user would set up the system, which requires lining up their face with a section highlighted on the screen so that the system can take an accurate reading. Using the system, Sarah was able to use the smartphone to speak the phrases "My name is Sarah" and "What is going on?". A second user was shown using Look to Speak, and after the instructor prompted them to give it a try, the user successfully responded with "Let's do it."

Look to Speak is available for devices running Android 9.0 and up. Those interested can find more information or try out the application at g.co/looktospeak. Check out the video below to see how Sarah helped the team test Look to Speak.


Derrek is the managing editor of Android Central, helping to guide the site's editorial content and direction to reach and resonate with readers, old and new, who are just as passionate about tech as we are. He's been obsessed with mobile technology since he was 12, when he discovered the Nokia N90, and his love of flip phones and new form factors continues to this day. As a fitness enthusiast, he has always been curious about the intersection of tech and fitness. When he's not working, he's probably working out.