Google uses AI to turn Billie Eilish's 'Bad Guy' into an endless video

What you need to know

- Billie Eilish's "Bad Guy" music video recently passed 1 billion views on YouTube.

- Google uses TensorFlow machine learning to create an endless video consisting of over 15,000 fan covers.

- Viewers can control the kinds of videos they're presented with or just let the video player choose for them.

I admit it: before today, I had never heard a Billie Eilish song. Maybe a snippet of a song in a commercial, but besides that I'd largely managed to evade the nonchalant vocal stylings of "Bad Guy". But thanks to Google's new machine learning experiment, I just can't seem to escape it.

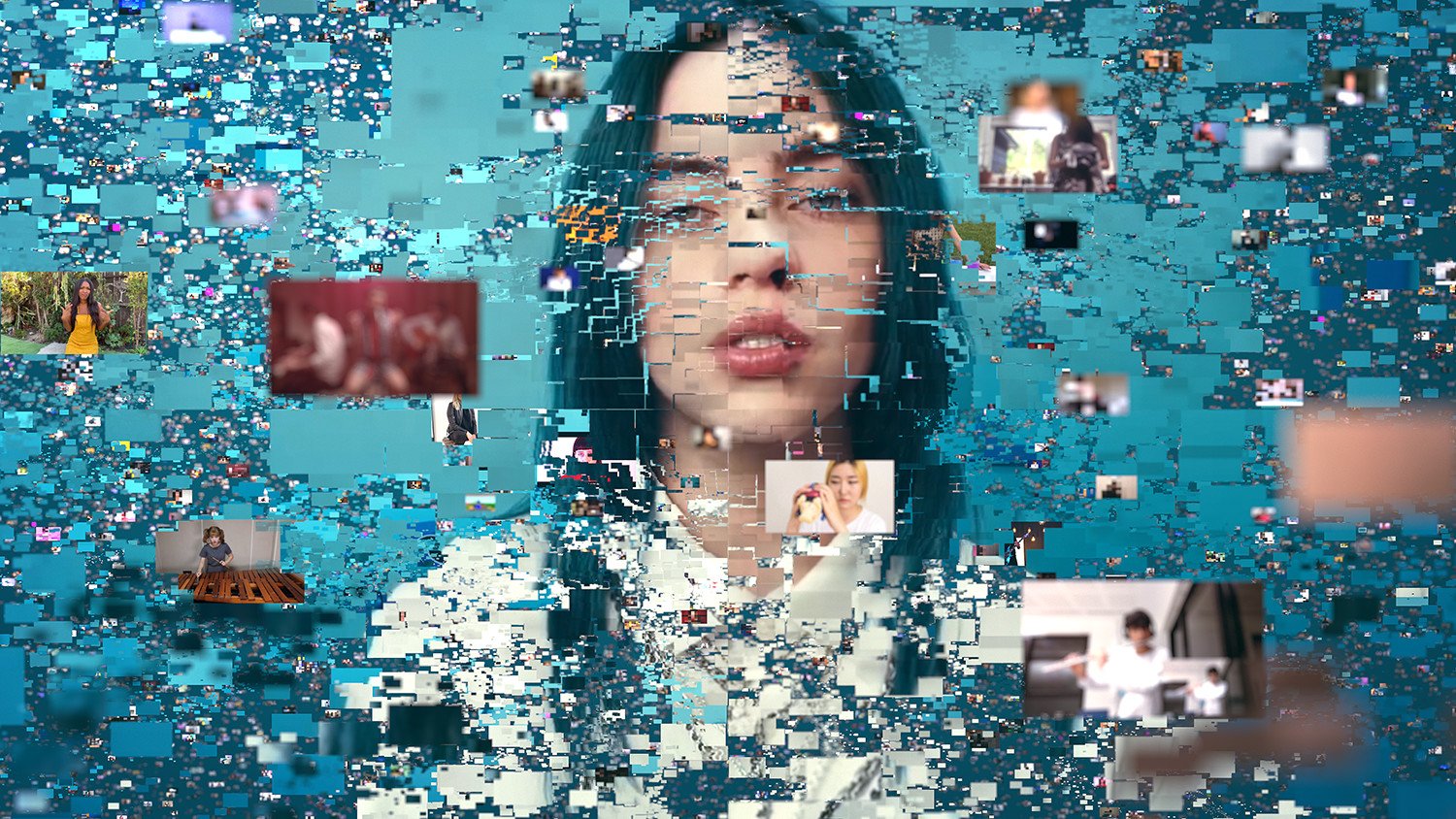

The song ended the record-setting 19-week run of "Old Town Road" at #1 on the Billboard Hot 100, and its music video has amassed a whopping 1 billion views. So to celebrate, YouTube and Google Creative Labs created an infinite music video with the help of machine learning and a collection of over 15,000 covers of the song found throughout YouTube.

Tens of thousands of YouTube creators have covered Billie Eilish's "Bad Guy." What if those fans could play together? Machine learning keeps all the covers on the same beat and lets you jump from video to video seamlessly. With endless possible combinations, every play is unique and never the same twice.
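Google doesn't spell out here how the covers are kept in sync, but the basic idea of jumping between videos "on the same beat" can be illustrated with a minimal sketch. It assumes each cover already comes with a list of detected beat timestamps in seconds; the function name `map_playback_time` and the beat lists are hypothetical and are not taken from Google's implementation.

```python
from bisect import bisect_right


def map_playback_time(t, beats_from, beats_to):
    """Map time t (seconds) in one cover to the matching musical
    position in another cover, using per-cover beat timestamps.

    Hypothetical helper for illustration only.
    """
    if len(beats_from) < 2 or len(beats_to) < 2:
        raise ValueError("each cover needs at least two beat timestamps")

    # Find the beat interval that contains t in the source cover.
    i = bisect_right(beats_from, t) - 1
    # Clamp so that interval i..i+1 exists in both covers.
    i = max(0, min(i, len(beats_from) - 2, len(beats_to) - 2))

    # How far through the current beat are we (0.0 = on the beat)?
    frac = (t - beats_from[i]) / (beats_from[i + 1] - beats_from[i])

    # Land at the same fraction of the same beat in the target cover.
    return beats_to[i] + frac * (beats_to[i + 1] - beats_to[i])
```

With this kind of mapping, a player could switch from one cover to another mid-song and resume at the equivalent beat, even if the two performances run at slightly different tempos.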

Once the Infinite Bad Guy page loads, the video starts in the center and others materialize on either side of it. The selection is fairly random, and if you click on a video, a new set of companion videos materializes. For instance, clicking a cover that featured a video game brought up another video with a similar game.

Viewers can further control what kind of videos they're presented with by selecting from the row of hashtags below the player, or by clicking the arrow at the bottom to display the entire list of hashtags. Or they can surrender completely to the song and select "auto play," which cycles through seemingly random videos.

The experiment is laid out a little differently on mobile browsers, but it functions the same way. It's all fairly seamless, too, minus some slight lag when loading a new video. And once the song ends, it starts right back up again with a new host of videos to present. According to the teaser below, it would take more than a googol years to go through all of the combinations:

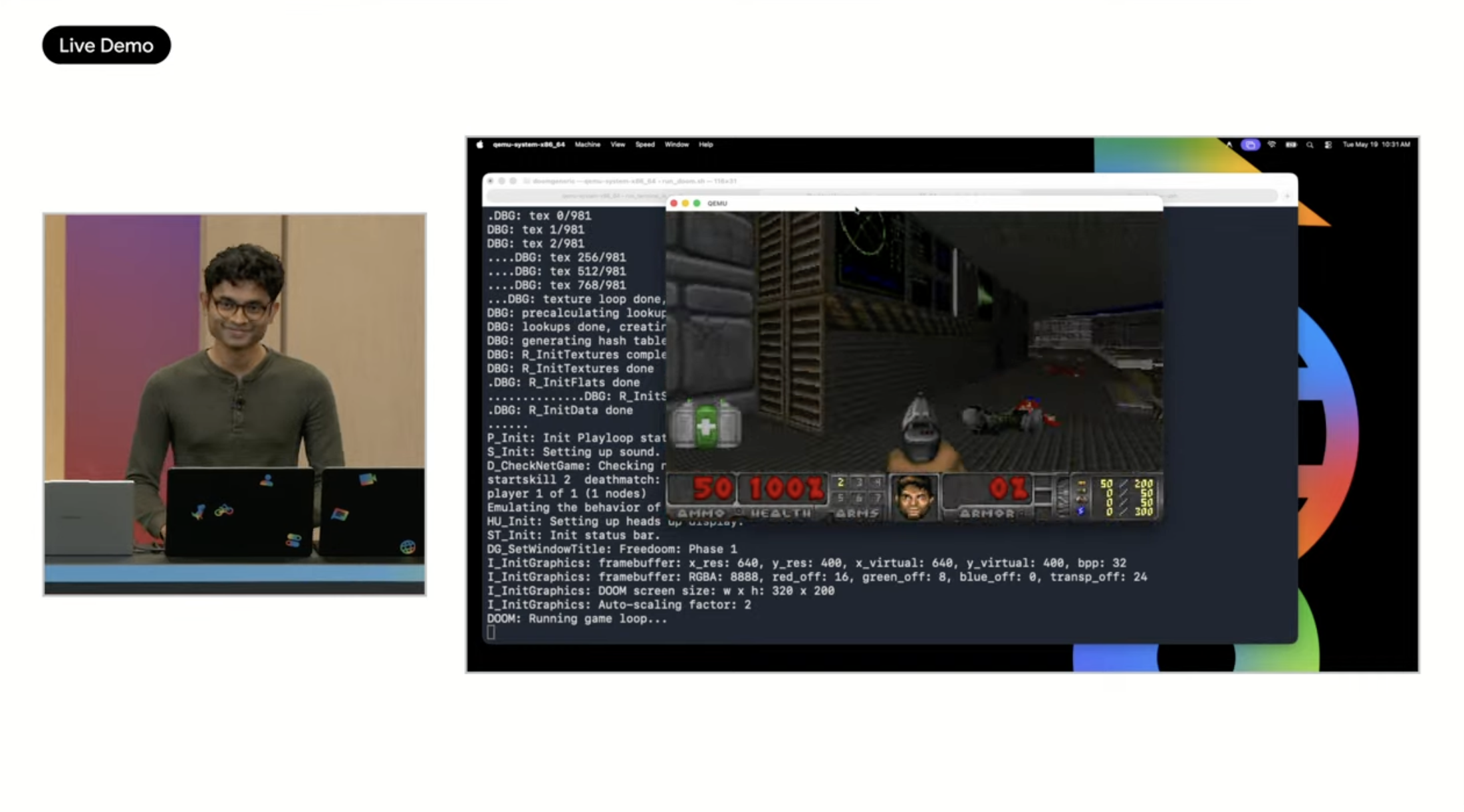

The experiment is powered by TensorFlow, Google's open-source machine learning tool. It was an integral part of Google's Pixel Visual Core image processor, which helped get more out of the phones' camera hardware. Google seems to have ditched that processor in this year's new Pixel smartphones, instead making use of the NPUs present in Qualcomm's chips. Regardless, TensorFlow is still used in a number of Google experiments, all of which you can find on its website.

Derrek is the managing editor of Android Central, helping to guide the site's editorial content and direction to reach and resonate with readers, old and new, who are just as passionate about tech as we are. He's been obsessed with mobile technology since he was 12, when he discovered the Nokia N90, and his love of flip phones and new form factors continues to this day. As a fitness enthusiast, he has always been curious about the intersection of tech and fitness. When he's not working, he's probably working out.