Google Search will make it easier to find local businesses you don't know about

You can start with a picture or screenshot, and Google will guide you in the right direction.

What you need to know

- Google has announced a new feature that will allow you to search for local information that you are unsure how to find.

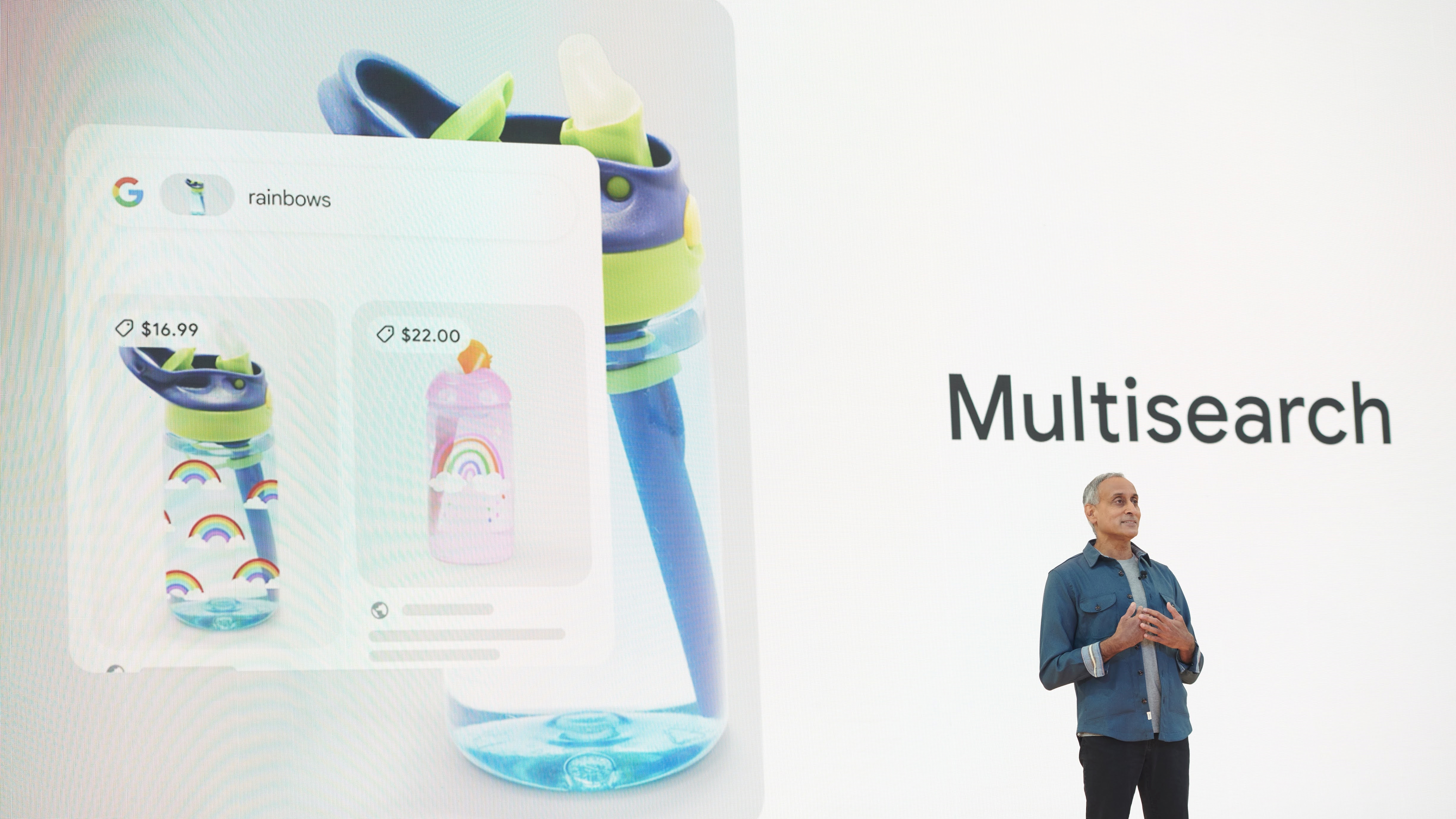

- The new feature expands on the multisearch feature that was introduced in April.

- Google will launch multisearch for local information in English across the globe later this year.

Last month, Google announced multisearch, one of the most significant changes to Search in several years, allowing you to search for things you can't describe with words. While the feature was initially designed for shopping, Google is now making it much more useful for almost anything you want to find on the web.

At its I/O 2022 event today, Google announced that the multisearch function is expanding to local search results. This means you'll soon be able to Google for local information that you don't know how to find using text alone.

The expanded multisearch feature lets you search by image and then add "near me" to see, for example, nearby restaurants or retailers that match. So the next time you see a dish you want to try but don't know its name, you can take a photo of it with Google Lens and find local restaurants that serve it.

Google does this by scanning "millions of images and reviews posted on web pages." It also sources information from Google Maps contributors and then matches it to your specific search criteria.

Local multisearch will be available globally for English searches later this year. To use the feature, launch the Google app on any of the best Android phones or iOS devices, and then tap the Lens icon on the right side of the search bar to upload a photo or screenshot.

On top of the multisearch improvement, Google has introduced another feature called scene exploration. It still relies on multisearch to let you search for objects using a combination of text and images at the same time. But instead of uploading a picture from your gallery, you can simply "pan your camera and instantly glean insights about multiple objects in a wider scene."

As Google senior vice president Prabhakar Raghavan put it during the event, the function "is like having a supercharged Ctrl+F for the world around you."


Raghavan gave the example of a nut-free chocolate bar. When you try to find this product in a supermarket, Lens will allow you to scan an entire shelf with your phone camera and see overlays on your screen with helpful insights.

Google's multisearch function is expected to do a lot more than that down the road, allowing you to search for things even if you don't have all the words to describe what you're looking for.

Jay Bonggolto has a nose for news. He has been writing about consumer tech and apps for as long as he can remember, and he has used a variety of Android phones since falling in love with Jelly Bean. Send him a direct message via X or LinkedIn.