Google's smartphone division is in a very interesting, even precarious, position. At once, it's trying to appeal to two disparate ends of the market: the design and experience-focused high-end phone buyer who is typically drawn to the iPhone; and the Google-loving Android enthusiast who wants a very different set of features and desires the "purest" Google experience. The latter comes from years of selling Google-sanctioned Nexus phones that were so often the dream devices of Android diehards, while the former comes from Google's goal to capture the most lucrative and sought-after group of consumers in the market.

The solution, as was the case last year with the canonical Pixels, comes in the form of "one" phone that's actually two — this year, it's the Pixel 2 and Pixel 2 XL. Starting at $649 for the base model Pixel 2 and going up to $949 for the top Pixel 2 XL, these things are costly — and Google thinks it has both the hardware and software chops to make them worth it. A refined emphasis on in-house hardware design and a compelling story about deep integration with Google's bevy of services make the Pixel 2 and 2 XL rather unique among Android phones — and, of course, quite similar to Apple's playbook with the iPhone.

Google's hardware division isn't a project or a hobby anymore. It's the real deal. Let's see if the Pixel 2 and Pixel 2 XL live up to that standard in our full review.

About this review

I am writing this review after six days using the Pixel 2 and Pixel 2 XL. They were used on both the Project Fi and Verizon networks in the greater Seattle, WA area. The software was not updated during the course of our review.

For our video review, Alex Dobie has also been using both the Pixel 2 and Pixel 2 XL for a total of five days in Manchester, UK, and Munich, Germany on the EE and Vodafone networks (roaming on Telekom.de and Vodafone DE while in Germany). The phones were provided to Android Central for review by Google.

Because of their considerable similarities, we're grouping together both the Pixel 2 and Pixel 2 XL into a single review. The opinions and observations expressed in this review are applicable to both phones, except in specific places where one model is mentioned in particular.

In video form

Pixel 2 and 2 XL Video review

For the full visual take on these new phones from Google, be sure to watch our complete video review put together by our very own Alex Dobie. For the specific details on the pair, you'll want to read our entire written review here.

Keep it simple

Pixel 2 and 2 XL Hardware

2016's HTC-built Pixel and Pixel XL were identical phones simply built at two different scales. This year, despite Google's insistence on branding the "Pixel 2" as a single phone, things aren't so simple. Sure, at a glance, the Pixel 2 and Pixel 2 XL look like the same phone in two different sizes. But pick them up, and each is clearly unique.

The Pixel 2 XL is getting a majority of the attention, and I'd say rightfully so. The big 18:9 display, rounded corners and smaller bezels just feel more modern, looking very similar to the LG V30 (wonder why) and Galaxy S8+. In stark contrast to the smaller Pixel 2, the 2 XL's front glass is steeply curved on all sides to flow over the edges and meet the metal sides further down. It feels and looks absolutely fantastic, and the lack of any sharp edges or right angles on the entire front just feels "right."

The problem, from my perspective, is its overall size, which will be too big for some to manage. It's basically the same size as the Galaxy S8+: just under 2 mm shorter, but also over 3 mm wider and the same weight. For another comparison, the Pixel 2 XL is larger (and not just a little bit) in every dimension than the LG V30. It teeters on the edge of being too big to reach across, and is definitely too big to comfortably reach the top quarter of the display when holding it in one hand. Thankfully, the Pixel 2 XL has a flat display that doesn't have accidental palm touch issues, and a fingerprint sensor in a perfect position to reach in any case.

The Pixel 2, on the other hand, harkens back in so many ways to the Nexus 5X: the proportions, the curves, the overall look from the front. Its metal sides come up further and to a sharper beveled edge where they meet the front glass, and the glass itself is nearly flat with only a minor amount of "2.5D" curving at the edges. The 16:9 display obviously isn't as tall as the 2 XL's, but the bezels on the top and bottom add enough height that the overall proportions are very similar to its larger sibling.

Once you get past the front and how the glass curves, the phones are almost identical.

For all of this typing focused on the differences between the two, there is so much shared in the hardware of the Pixel 2 and 2 XL. Once you get past the front and how the glass curves into the sides, things are as close to identical as possible. The aluminum frame feels thick and finely constructed, with a textured coating that gives you far more grip — albeit at the expense of feeling a bit less like metal than the 2016 Pixels, a compromise I feel is worthwhile. The glass insert at the top of the phones is smaller now and inset perfectly, but now marred by a small camera bump that makes the taller 2 XL wobble on the table a bit when you're tapping the screen.

There isn't much else to say about the design of these phones, particularly when you have them both in black as I do. Like their predecessors, and even more so this time around, the Pixel 2 and 2 XL are monolithic, near-featureless and quite basic in their overall hardware. They don't have the stunning curves, flashy polished metal or distinctive lines of many other phones out there. The best you get here are the offset colored power buttons on the "kinda blue" Pixel 2 and "black & white" Pixel 2 XL.

The hardware is clean, efficient and beautiful — but not flashy.

Mercifully, Google has added IP67 water- and dust-resistance to the Pixel 2 and Pixel 2 XL, which is downright table stakes at this point (and some would argue it was last year). Whether directly related or not, this has also coincided with the loss of the headphone jack, which was something Google specifically mentioned as a benefit on the original Pixels. (Ugh. C'mon.) Google includes a USB-C to 3.5 mm headphone adapter in the box, and sells extras in the Google Store for $9, but frustratingly doesn't put USB-C headphones in the box. The industry is leaving the 3.5 mm headphone jack behind, I get that, but I really wish Google hadn't cheaped out here, particularly on the $849 Pixel 2 XL, and had chosen to include some headphones considering how few people have USB-C headphones right now.

Adding to the frustration is attempting to navigate the world of USB-C adapters and headphones. At this point there's no clear or consistent way to know, when you buy them, whether they'll actually work with your phone. For example, HTC's headphones don't work with the Pixel 2, but its headphone adapter does. And Motorola's adapter doesn't work with Google's phones at all.

A tale of two displays

Alright, back to the differences again: let's talk about displays. Google's biggest selling point on the Pixel 2 XL's display was its color accuracy and the fact that it could reproduce 100% of the DCI-P3 color space. And to my eyes, that's clearly where all of the tuning time went: accuracy above all else. Because this screen, I hate to say, looks a bit dull and washed out. Being used to Samsung's vibrant and colorful displays, which by default exhibit punchier, more saturated colors, I find the 2 XL kind of disappointing at first look. No matter how you feel about the colors, you'll notice apparent color shifting whenever you view the phone even slightly off-axis, to the point where, holding the phone at an angle, the colors at the top of the display (farther from you) are more blue/green than those at the bottom.

The Pixel 2 XL's display is actually disappointing, but the standard Pixel 2 surprises.

The 2880x1440 resolution is plenty high, but the Pixel 2 XL exhibits the same sort of soft grain and grit as the V30 on white backgrounds when scrolling — one of those things you can't un-see once it's been pointed out. It's something we expect to see on super low-end phones, but not anything remotely high-end in the past few years — and it's surely not a problem that Samsung has with its OLED displays nowadays.

Thankfully, over time your eyes get used to its calibration, as they do with any other phone, and you start to see some of the benefits compared to last year. The Pixel 2 XL gets much dimmer in low-light situations where you want it to, peak brightness is higher (though it is, of course, not as bright as a Galaxy Note 8), and daylight visibility is improved because of it.

Funny enough, it's the smaller, lower resolution, less-accurate and ostensibly lower-end Pixel 2 display that actually looks better to my eyes. Its brightness (both high and low) is very similar to the 2 XL, but it doesn't exhibit the grain on white backgrounds or the color shifting at angles that are annoying on the larger phone. At the same time, its colors have a bit more punch and depth to them — mostly due to the display just being a tad warmer overall.

I don't think the display quality differences are so big that they alone should make you want to choose one phone over the other. There are other factors like the actual physical size of the screen and the design of the phone that are likely bigger purchase drivers. But it's certainly worth noting that just because the Pixel 2 XL is bigger and more expensive doesn't mean it has the better display.

The best around

Pixel 2 and 2 XL Software and experience

For the vast majority of people out there, the best Android experience comes directly from Google on a Pixel phone. If there's one thing we've seen play out consistently over the years, it's new high-end phones coming out with piles of bells and whistles to appeal to as many people as possible, only to eventually hurt the daily experience because of how they were saddled with all of this superfluous crap. Google's Pixel phones are the exact opposite: in having fewer features and options for customization, they offer a superior daily experience for almost every kind of smartphone user today.

Google has gotten really good at this whole user experience and interface design thing.

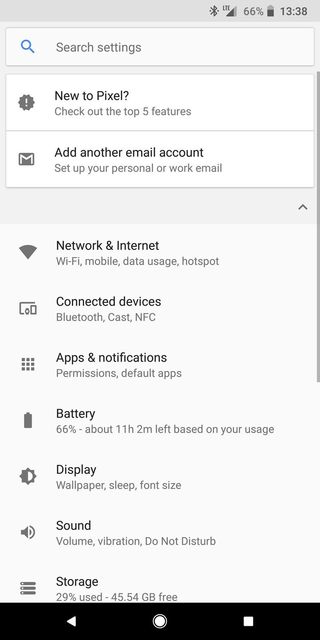

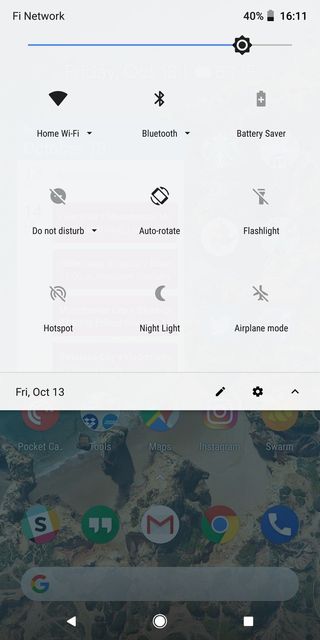

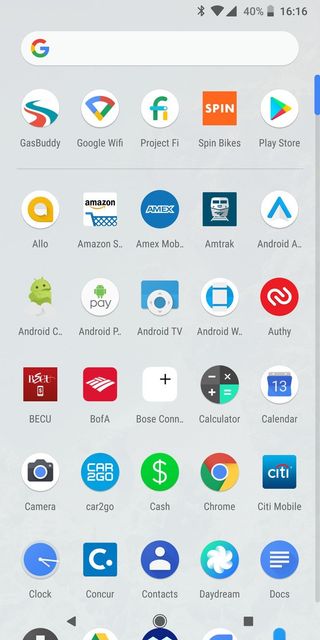

Loading up your Pixel 2 or 2 XL for the first time, you won't be greeted by a super-long setup process, duplicate apps, extra account permissions or clunky backup and restore settings. Google's default apps are some of the best in the business — many of which you'd likely install on any Android phone — so for many people, they won't feel like they have to go hunting for anything from the Play Store from the start. As it turns out, Google has gotten really good at this user experience and interface design stuff — everything just flows and makes sense. Android 8.0 Oreo has a lot of nice features that will be great for any Android user, but it's absolutely fantastic to see it all working as intended by its creators with no additional changes.

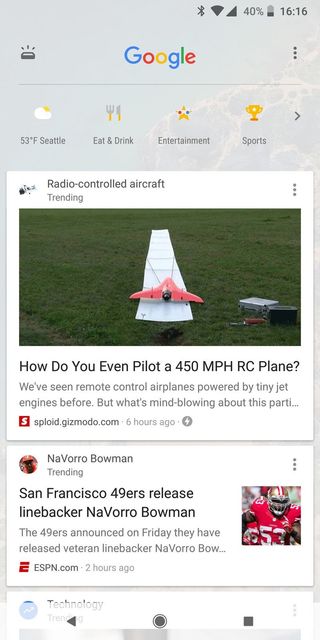

That's not to say that the Pixel 2 and 2 XL give you the same type of empty, spartan experience of old Nexuses. Google has consistently added little features and changes to its software in the last year, but for the most part they are both simple and noninvasive. Just look at the new feature that passively identifies any ambient music and displays it on your lock screen — that isn't something that gets in your way, but it's a neat bit of magic to see when you glance down at your phone on the table. The same goes for Google Assistant being available with a squeeze of the phone, or being able to back up as many photos as you want in their original quality (for three years) to Google Photos. It's all of these "small" things that are both out of your way and hugely impactful to the overall experience of the phone when you add them up.

And of course Google's core competency of having guaranteed update windows for these phones is something that will always differentiate it. With the Pixel 2 and 2 XL it has promised three years of platform and security updates, meaning if you buy one of these you just won't have to worry about having up-to-date software — that's important if you care about that sort of thing, but really important if you're someone who doesn't.

Performance

This is what people who are "in the know" buy a Pixel for: the performance. And not just in terms of synthetic benchmarks, but in real-world "live with this every day" speed that you just don't get in any other Android phone. With the new processor and another year of optimization, Google picks up right where it left off with last year's Pixels. Both of these phones are ridiculously fast, smooth and consistent in everything you do.

It's something I've obviously come to expect from Google's own phones, but after using other phones that are fast but still have hiccups now and then, it's just so refreshing to have something this consistently good in your hands. The thing about speed like this is that you don't have to be a smartphone nerd to appreciate it. Everyday people who are used to their slightly old and stuttery phone will be blown away by the Pixel 2 and 2 XL.

I figured this is as good a place as any to mention audio performance — namely, how both of these phones come with front-facing stereo speakers. I'm never going to say that the addition of stereo speakers is a fine trade-off for not having a headphone jack, but these speakers are really good. I'd put them right up next to the HTC U11's in terms of quality and volume, which means they both totally blow away the single speaker on phones like the Galaxy S8. The phones obviously aren't big enough for real stereo separation, but having audio coming right at you when watching video is far better than blowing out one end.

Battery life

With a 3520mAh battery and super-efficient Snapdragon 835 processor, the Pixel 2 XL is poised to have really good battery life. And indeed it lives up to expectations. On my first full day with the 2 XL, it made it through a 16-hour day with 5 hours of "screen on" time, and it was at 5% battery when I tossed it on the charger before bed, which was surprisingly good considering how much I used it throughout the day. This was with everything turned on, syncing and notifying me, with the default "living" wallpaper (a clear increase in power drain), auto screen brightness, plenty of podcast listening over Bluetooth, and time spent in the camera. In subsequent days things were even better as I went easier on the phone.

The Pixel 2 XL's battery life is exceptional, and the Pixel 2 is actually a full-day phone as well.

There's plenty of rational concern that the Pixel 2's 2700mAh battery, being 23% smaller, isn't large enough considering it has the same overall specs and capabilities, with the only change being a smaller 1080p display. Thankfully, things seem much better than with last year's Pixel and its 2770mAh battery. With a more efficient processor, the Pixel 2's battery is actually really solid. Using the Pixel 2 the same way as the 2 XL, it's good for a full day of use: at least 16 or 17 hours, albeit with less than the 5 hours of "screen on" time; more like 3 to 4 hours instead. But that's just fine for me. It means I don't really have to worry about battery life, even with what is admittedly a really small battery for a 2017 flagship.

New benchmark

Pixel 2 and 2 XL Cameras

Even a year after their release, the Pixel and Pixel XL were easily still some of the best available smartphone cameras. That was due in no small part to Google's excellent photo processing, which was paired with camera hardware that lacked the typical assistance of OIS (optical image stabilization) yet produced fantastic photos regardless. This year, Google has added OIS, widened the aperture to f/1.8 and improved its processing, with the only downside (if you could call it that) being slightly smaller pixel size on the 12.2MP sensor.

The results are utterly fantastic. Google hasn't strayed from its core philosophy on photography, which is to give you a mostly accurate photo but also crank up the colors and use HDR techniques to give you a beautiful shot. To that point, HDR+ is now on permanently by default, leaving you to jump into the advanced settings to give yourself a toggle to turn it off. But I'm not sure why you would — HDR+ processing is great, and even faster than before.

So this is what happens when you take Google's great photo processing and add it to even better hardware fundamentals. Shots are crisp with great detail, and some close-up shots have just unreal levels of fine detail in lines. In situations where the smartphone-sized sensor simply can't work out a scene you get some high ISO noise that looks totally normal and expected — not over-processed and gross. Colors are just punchy enough to grab your eye without being crazy. And best of all, shot-to-shot consistency is fantastic. I don't think I took a single photo that was "bad" — I either took "good" or "great" photos.

Portrait Mode

The perfect example of Google flexing its software processing muscle is the inclusion of a Portrait Mode even though the phones have only a single rear camera. The camera uses the distance between individual pixels on the sensor to determine depth, then defines the foreground and background in software and applies a background blur in the final photo. Like all of these modes from other companies, Google's isn't perfect, but shockingly it's just as good as the rest, and in many cases I found it to be even better.

Portrait Mode still struggles with stray hairs on people's heads, and sometimes with extra accouterments like glasses or big over-ear headphones. But I didn't find that it had issues with inanimate objects that have solid, straight lines on their sides like I sometimes saw on the Galaxy Note 8. Portrait Mode simply won't activate if the software thinks that it can't apply the effect properly on the subject, and in any case gives you a "standard" photo alongside your portrait shot.

Perhaps the most impressive part of Google's Portrait Mode system is that it also works extremely well on the front-facing 8MP f/2.4 camera. The effect can sometimes feel a bit overboard, but its edge detection is still top notch on the front-facer. The extra processing leads to really stand-out selfies — some of the best I've taken with a phone.

Video

Alex does a fantastic job actually illustrating how well the new Pixel 2 and 2 XL do with their video mode in our video review. In short, the addition of OIS to Google's already fantastic EIS (electronic image stabilization) produces great results. The video is so stable it seems impossible that it's coming from a phone with no extra stabilizing hardware assistance.

This year's Pixels seem to be a bit better about letting some of the natural movement of your hand come across in the video, though, which is particularly noticeable when walking and panning the camera. It means that the video remains stable, but doesn't look so artificially stabilized that it bothers your eyes. The Pixel 2 and 2 XL may not have all of the crazy video capabilities of the LG V30 when it comes to tweaking and utilizing specific effects, but for simple "point and shoot" videography it's amazing.

Google does it again

Pixel 2 and 2 XL Bottom line

Google has, once again, made the best pair of Android phones you can buy today. If someone has at least $649 to spend, knowing nothing else about what they want from a phone, I will be able to recommend they buy a Pixel 2 and have no worries about them enjoying the experience.

In either phone, you get hardware that's well-built and beautiful with all of the requisite specs and base hardware features, paired with an unrivaled software and user experience that you'll enjoy every day. You're also getting a smartphone that's likely to produce the best photos you've ever seen come out of a phone, in just about any situation you put it in. Then you get the smaller things you only notice over time — very strong battery life, loud stereo speakers, IP67 water resistance, software that's well hedged against slowdowns over time, and three years of guaranteed updates.

The Pixel 2 XL's display quality is objectively not good enough to match its $849 starting price, but the smaller Pixel 2's is more than good enough for $649. The lack of a headphone jack is troubling for many, myself included. And the software doesn't have the massive number of specialized features you'll find on other phones.

But those few cons are washed away in just a couple of hours of actually using either phone, and that excellent experience will stay strong for months, and even years, to come. Google has outdone itself this year. It has made the phones that everyone should be considering, even if its sales will end up being tiny in comparison to the big names.

The only question, really, is which size you should buy. The Pixel 2 XL is probably too expensive for many people, and its 6-inch display may actually be too big as well. The Pixel 2, with a very attainable price, offers excellent value for the money — it also has a better display and more manageable size. Unless you feel like you need the extra screen size or battery of the 2 XL, pick the Pixel 2. You'll love it.

4.5 out of 5

Andrew was an Executive Editor, U.S. at Android Central between 2012 and 2020.