Google's making phones more accessible to people with speech and hearing impairments

- Live Caption offers on-device live captioning for videos of all kinds.

- Live Relay allows people with speech disabilities to have phone calls using the Google Assistant.

- Project Euphonia from Google AI is being created to help people with speech impairments communicate more quickly and easily than ever before.

It can be really easy to take our phones for granted. We use them day in and day out without giving them much thought, but for people with disabilities, even the simplest of tasks can prove daunting. At Google I/O 2019, Google announced three new projects it's working on to change this: Live Caption, Live Relay, and Project Euphonia.

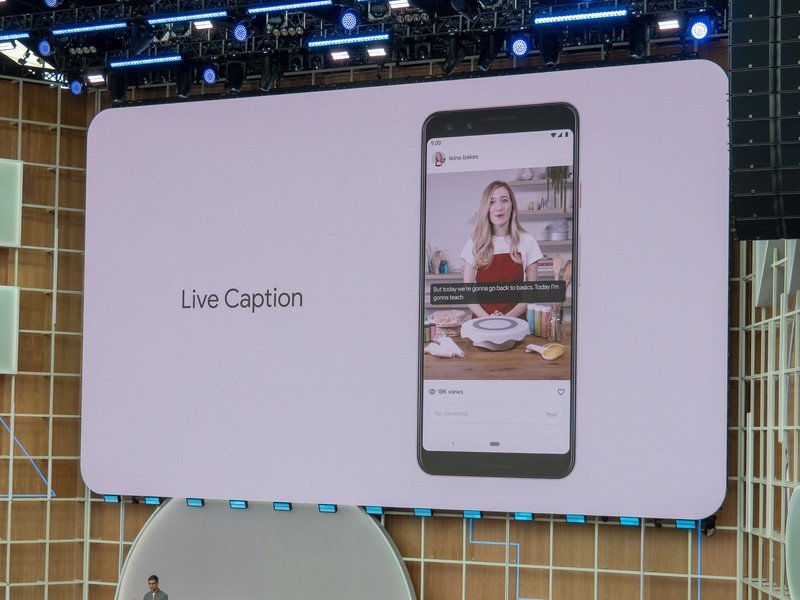

Live Caption

Closed captions are readily available on services like Netflix and YouTube to make videos more accessible to people who are deaf or hard of hearing, but captions in their current state have some problems. Not every video or service supports them, and they often require a data connection of some sort.

With Live Caption, Google has created a system that offers on-device captioning in real time for media of all kinds. Whether you're watching a YouTube video, a video you recorded yourself, or literally anything else, Live Caption transcribes words on the fly, with no data connection of any kind required.

We don't have an exact release date, but Google says it's launching "later this year."

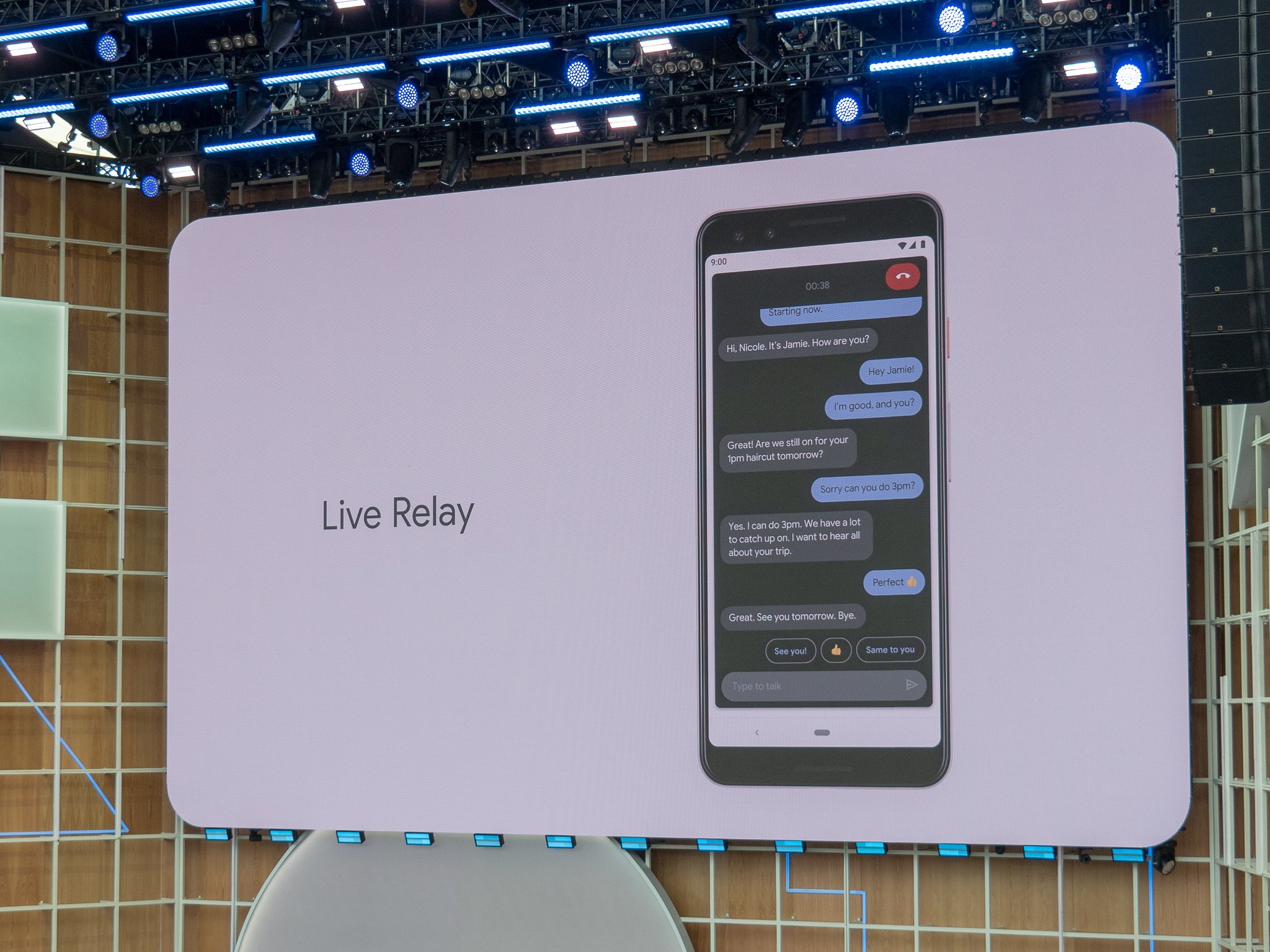

Live Relay

On the flip side of things, Live Relay was designed to help people take phone calls even if they have difficulty speaking.

If someone answers a call using Live Relay, the person on the other end of the call will be told that they're speaking with someone who is using Google's Live Relay service, which is powered by the Assistant. This is very similar to how Google Duplex works.

Everything the person on the other end says is transcribed in real time on the Live Relay user's screen. To reply, you can type custom messages on your keyboard or use Smart Reply and Smart Compose to quickly send suggested messages based on what the person just said.


Just like Live Caption, Live Relay is run locally on your phone and doesn't require data or Wi-Fi of any kind in order to work.

Live Relay is still in a "research phase", but we're hoping it launches sooner rather than later.

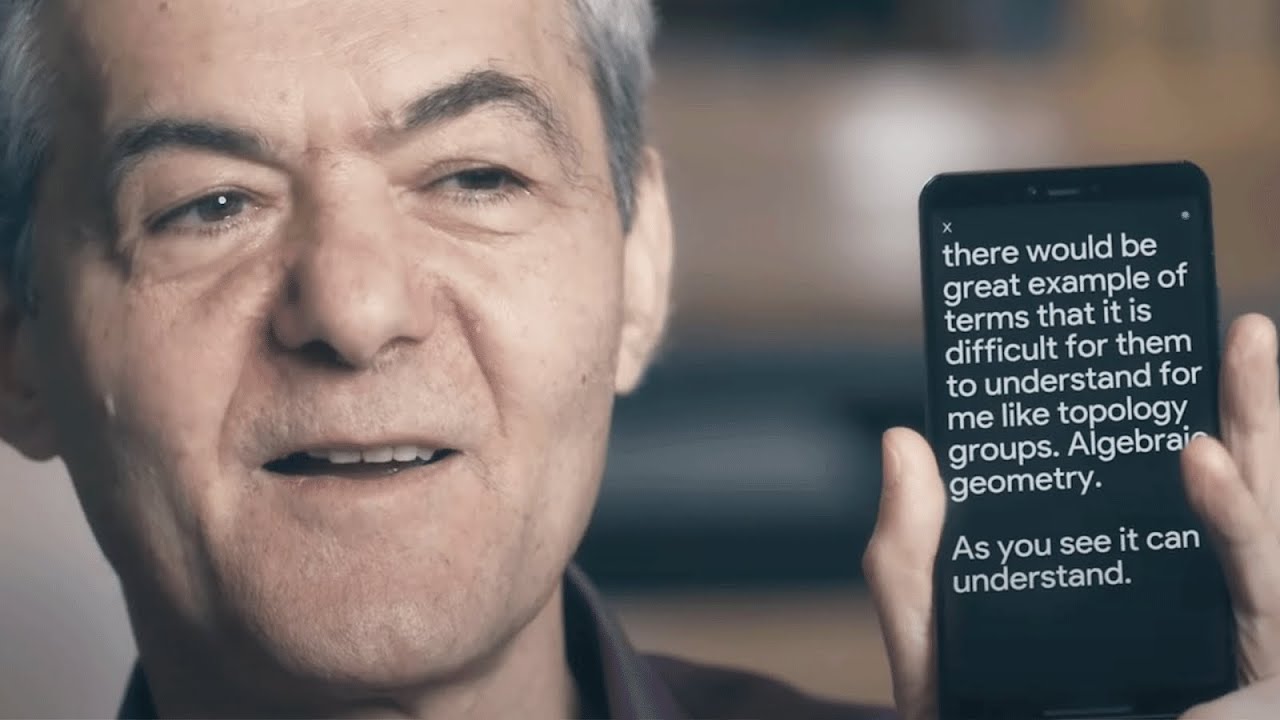

Project Euphonia

Last but certainly not least, Project Euphonia is being created to help people with ALS, multiple sclerosis, or other conditions that cause speech impairments communicate more easily than ever before.

Per Google:

To do this, Google software turns the recorded voice samples into a spectrogram, or a visual representation of the sound. The computer then uses common transcribed spectrograms to "train" the system to better recognize this less common type of speech. Our AI algorithms currently aim to accommodate individuals who speak English and have impairments typically associated with ALS, but we believe that our research can be applied to larger groups of people and to different speech impairments.

In addition to improving speech recognition, we are also training personalized AI algorithms to detect sounds or gestures, and then take actions such as generating spoken commands to Google Home or sending text messages. This may be particularly helpful to people who are severely disabled and cannot speak.

Joe Maring was a Senior Editor for Android Central between 2017 and 2021. You can reach him on Twitter at @JoeMaring1.