Google is building deep neural networks to help improve its search engine

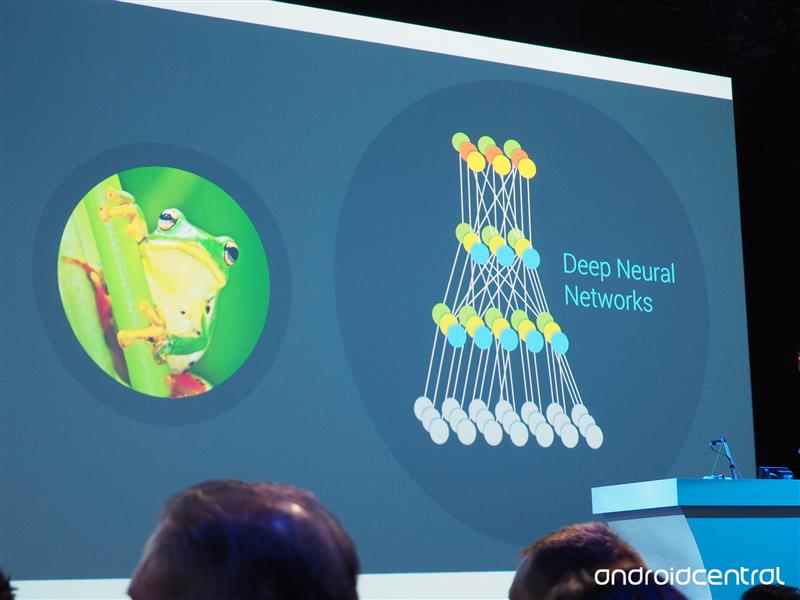

At today's Google I/O keynote address in San Francisco, Google says it has been busy building deep neural networks that learn and help the company improve its search engine. Google says its neural nets are 30 layers deep, and successive layers recognize progressively more complicated things. For example, they can pick out specific shapes and colors.

The work is already paying off. Google says its word error rate, a measure of speech recognition accuracy, has dropped from 23% to just 8% in one year. The neural nets also help with other services. For example, the Inbox email app can pull together information related to a trip to London, and Google Now can let users know when to leave early for work based on traffic patterns.
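For readers unfamiliar with the metric, word error rate is conventionally computed as the word-level edit distance between the recognized text and a reference transcript, divided by the number of words in the reference. The Python sketch below illustrates that standard definition only; it is not Google's code, and the function names and sample sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1        # reference word missing from hypothesis
            insertion = curr[j - 1] + 1   # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(old_rate: float, new_rate: float) -> float:
    """Fraction by which the error rate fell, relative to the old rate."""
    return (old_rate - new_rate) / old_rate


if __name__ == "__main__":
    # Hypothetical sample sentences, not taken from the keynote.
    print(word_error_rate("leave early for work", "leave yearly for work"))
    # The figures quoted above: 23% down to 8%.
    print(round(relative_reduction(0.23, 0.08), 3))
```

By this arithmetic, going from 23% to 8% is a relative reduction of roughly 65%, meaning about two out of every three earlier word errors would no longer occur.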

Stay tuned as we will continue to post updates from today's Google I/O keynote event.
