Here's how the Pixel 4's Soli radar works and why Motion Sense has so much potential

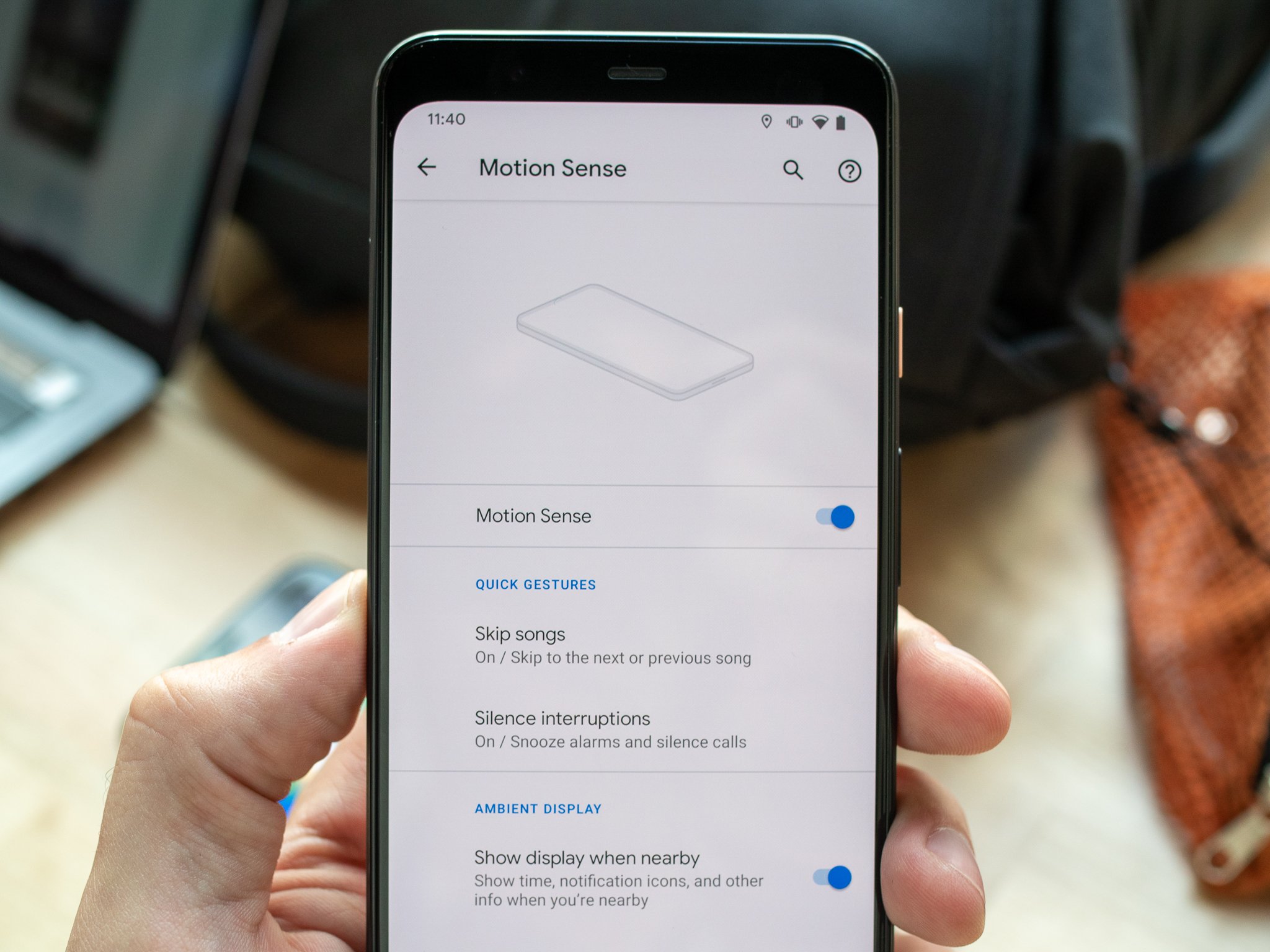

Google's Project Soli chip powers Motion Sense on the Pixel 4, and we expect to see it branch out into other functions and features over time. With the arrival of the Pixel 4, we've heard a lot about what it can do, but not as much about how it does it.

It only seems like magic.

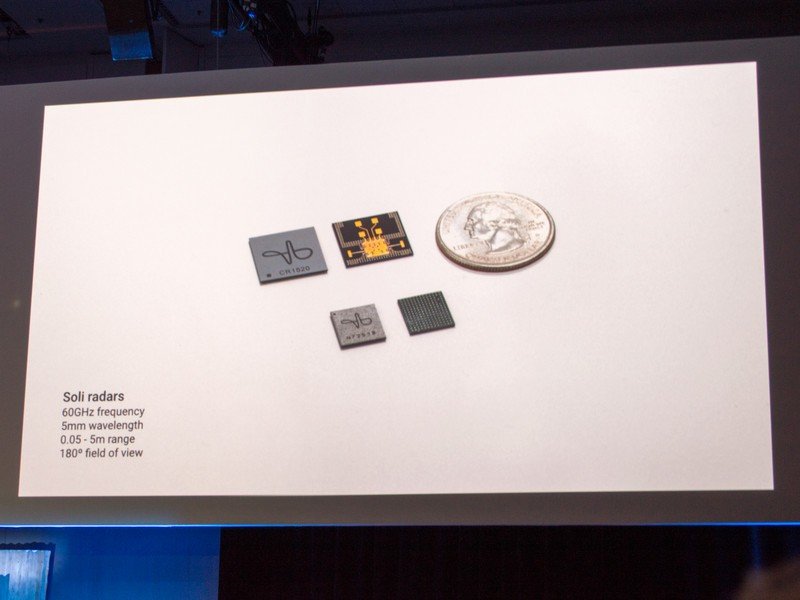

Soli is actually simpler than you might think. The science behind it can look and feel a bit like magic, but Soli relies on proven methods to capture fine motor movement. The biggest hurdle was packing everything into a chip small and power-efficient enough for a phone. In a nutshell, Soli uses millimeter-wave radar to detect motion at the micron level (a micron is one-millionth of a meter) and passes that data off to software.

You might have heard the term millimeter wave in discussions of 5G. It simply describes radio signals with wavelengths between 1 and 10 millimeters, which corresponds to frequencies of roughly 30 to 300 GHz. That puts it at the top of the microwave range, just below infrared. Soli isn't going to interfere with 5G or meteorology, but Google did need regulatory approval, including from the FCC in the US, to use it (which is why Motion Sense is only available in a few countries).
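
To put numbers on the band, here's a quick Python sketch that converts frequency to wavelength using λ = c / f. The 60 GHz figure is based on Soli's widely reported operating band; the function name is just for illustration.

```python
# Speed of light in a vacuum, in meters per second.
SPEED_OF_LIGHT = 299_792_458.0


def wavelength_mm(frequency_hz: float) -> float:
    """Return the free-space wavelength, in millimeters, for a given frequency."""
    return SPEED_OF_LIGHT / frequency_hz * 1000.0


# The millimeter-wave band runs from roughly 30 GHz (about 10 mm) to 300 GHz (about 1 mm).
for label, freq in [("30 GHz", 30e9), ("60 GHz (Soli's band)", 60e9), ("300 GHz", 300e9)]:
    print(f"{label}: {wavelength_mm(freq):.2f} mm")
```

At 60 GHz the wavelength works out to about 5 mm, squarely in the millimeter range.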


Radar is a system that detects objects using radio waves. It can measure the range (distance), angle, and speed of anything in its path. Most of us know radar from weather reports, where it's used to detect rain, but radar can pick up anything that reflects radio waves, including solid objects like a hand.
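
Range is the easiest of these to compute. Assuming the radar knows exactly how long an echo took to come back, the distance is half the round trip at the speed of light. A minimal sketch (the helper name is ours, not Google's):

```python
SPEED_OF_LIGHT = 299_792_458.0  # meters per second


def range_from_delay(round_trip_s: float) -> float:
    """Distance to a reflector, in meters, given the echo's round-trip delay.

    The wave travels out and back, so the one-way distance is half the
    total path covered in that time.
    """
    return SPEED_OF_LIGHT * round_trip_s / 2.0


# A hand about 30 cm from the phone returns an echo after roughly 2 nanoseconds.
delay = 2 * 0.30 / SPEED_OF_LIGHT
print(f"delay: {delay * 1e9:.2f} ns -> range: {range_from_delay(delay):.2f} m")
```

Those nanosecond-scale delays are far too short to time directly with much precision, which is where modulation comes in.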

Sending a signal is one thing, but to get the juicy data you need to add an extra layer through modulation.

Soli has both a transmitter and a receiver on its chip. The transmitter sends out a modulated radio wave, meaning a "normal" carrier wave is combined with a second signal that encodes extra information. When this wave hits an object, it scatters in many directions, including right back at the Soli chip's receiver.
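
The article doesn't spell out which modulation Soli uses. One common scheme for short-range radar chips is frequency-modulated continuous wave (FMCW), where the transmitter repeatedly sweeps its frequency upward in a "chirp." Assuming FMCW, the echo is a delayed copy of the chirp, so mixing it with the signal currently being sent leaves a low "beat" frequency proportional to distance. The sketch below simulates that for a single target with made-up chirp parameters and recovers the range with a plain discrete Fourier transform:

```python
import cmath
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s

# Hypothetical chirp parameters, chosen for illustration only.
START_FREQ_HZ = 60e9            # chirp starts at 60 GHz
BANDWIDTH_HZ = 5e9              # and sweeps upward by 5 GHz
CHIRP_S = 100e-6                # over 100 microseconds
SLOPE = BANDWIDTH_HZ / CHIRP_S  # sweep rate in Hz per second
NUM_SAMPLES = 256               # samples taken during one chirp


def beat_signal(target_range_m: float) -> list[complex]:
    """Simulate the complex (I/Q) mixer output for one chirp bouncing off one target.

    The echo is the transmitted chirp delayed by tau. Mixing the two leaves the
    phase difference phi_tx(t) - phi_tx(t - tau), which rotates at SLOPE * tau Hz.
    """
    tau = 2 * target_range_m / SPEED_OF_LIGHT
    samples = []
    for n in range(NUM_SAMPLES):
        t = n * CHIRP_S / NUM_SAMPLES
        phase = 2 * math.pi * (START_FREQ_HZ * tau + SLOPE * tau * t - SLOPE * tau**2 / 2)
        samples.append(cmath.exp(1j * phase))
    return samples


def dft_magnitude(samples: list[complex], k: int) -> float:
    """Magnitude of DFT bin k, computed as a direct sum (fine for a small example)."""
    n = len(samples)
    return abs(sum(s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples)))


def estimate_range(samples: list[complex]) -> float:
    """Pick the strongest beat frequency and convert it back into a distance."""
    n = len(samples)
    best_bin = max(range(n // 2), key=lambda k: dft_magnitude(samples, k))
    beat_hz = best_bin / CHIRP_S  # bin k completes k cycles per chirp
    return SPEED_OF_LIGHT * beat_hz / (2 * SLOPE)


print(f"estimated range: {estimate_range(beat_signal(0.30)):.3f} m")
```

With a 5 GHz sweep, each DFT bin is worth about c / (2 × 5 GHz) ≈ 3 cm, so this toy version snaps to the nearest 3 cm; a wider sweep buys finer range resolution.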

Because the original wave was modulated, the receiver can compare it with the reflected signal and measure three things: the delay, the frequency shift (known as a Doppler shift), and the amplitude attenuation (how much weaker the echo is than the signal that was sent). Together, these tell Soli about what it is "seeing."
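
The Doppler shift and attenuation map onto physical quantities with simple formulas. This sketch assumes a 60 GHz carrier and made-up input numbers; it converts a Doppler shift into radial velocity (v = f_d × λ / 2) and an amplitude ratio into decibels:

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def radial_velocity(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Velocity toward (+) or away from (-) the radar, in m/s.

    A reflector moving at v shifts the echo by 2 * v / wavelength; the factor
    of two appears because the motion changes both the outgoing and return paths.
    """
    wavelength = SPEED_OF_LIGHT / carrier_hz
    return doppler_shift_hz * wavelength / 2.0


def attenuation_db(transmitted_amplitude: float, received_amplitude: float) -> float:
    """How much weaker the echo is than the transmitted signal, in decibels."""
    return 20.0 * math.log10(transmitted_amplitude / received_amplitude)


# Illustrative numbers: a 400 Hz shift at 60 GHz, and an echo at 1/1000 of the amplitude.
print(f"velocity: {radial_velocity(400.0, 60e9):.3f} m/s")
print(f"attenuation: {attenuation_db(1.0, 0.001):.1f} dB")
```

At 60 GHz, a hand moving at about 1 m/s produces a Doppler shift of only a few hundred hertz.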


The data collected can tell a lot about what is in the path of the radio wave. By measuring these reflected waves, Soli can determine an object's distance, speed, size, shape, smoothness, and more. It can even estimate what an object is made of and the angle at which it is oriented.
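
Angle is a little different: it comes from comparing the same echo at more than one receive antenna. Assuming two antennas spaced half a wavelength apart (a hypothetical layout, not Soli's published geometry), the phase difference between them gives the direction of the reflector:

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def angle_of_arrival_deg(phase_diff_rad: float, spacing_m: float, carrier_hz: float) -> float:
    """Estimate an echo's direction from the phase difference between two antennas.

    The echo reaches the farther antenna after travelling an extra
    spacing * sin(theta), which appears as a phase difference of
    2 * pi * spacing * sin(theta) / wavelength.
    """
    wavelength = SPEED_OF_LIGHT / carrier_hz
    sin_theta = phase_diff_rad * wavelength / (2 * math.pi * spacing_m)
    if not -1.0 <= sin_theta <= 1.0:
        raise ValueError("phase difference is too large for this antenna spacing")
    return math.degrees(math.asin(sin_theta))


# Hypothetical layout: two antennas half a wavelength apart at 60 GHz.
half_wavelength = SPEED_OF_LIGHT / 60e9 / 2
print(f"{angle_of_arrival_deg(math.pi / 2, half_wavelength, 60e9):.1f} degrees")
```

Half-wavelength spacing is a common choice because it keeps every phase difference between -π and π mapped to exactly one angle.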


This data is a gold mine for touchless input. While Soli captures data about the shape and size of an object it sees, the more important data for input is motion, range, and velocity. Because Soli can measure differences as small as a micron, it can accurately detect and track even the tiniest motions of a hand.
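
Micron-level precision typically comes from the phase of the echo rather than its timing. Assuming a 60 GHz carrier (a wavelength of about 5 mm), moving a reflector by d changes the round-trip path by 2d and the phase by 4πd / λ, so a single micron shifts the phase by about 0.0025 radians: small, but measurable. The hypothetical sketch below tracks displacement from a stream of phase samples (phase_for simulates ideal measurements), unwrapping the 2π jumps that appear once the motion exceeds half a wavelength:

```python
import math

SPEED_OF_LIGHT = 299_792_458.0        # m/s
WAVELENGTH_M = SPEED_OF_LIGHT / 60e9  # about 5 mm, assuming a 60 GHz carrier


def phase_for(displacement_m: float) -> float:
    """Simulated wrapped echo phase, in [0, 2*pi), for a reflector moved by displacement_m."""
    return (4 * math.pi * displacement_m / WAVELENGTH_M) % (2 * math.pi)


def unwrap(phases: list[float]) -> list[float]:
    """Undo the 2*pi jumps in a wrapped phase, assuming each true step is under pi."""
    out = [phases[0]]
    for p in phases[1:]:
        step = (p - out[-1] + math.pi) % (2 * math.pi) - math.pi  # nearest step in [-pi, pi)
        out.append(out[-1] + step)
    return out


def displacement_um(phases: list[float]) -> list[float]:
    """Displacement relative to the first sample, in microns.

    A move of d lengthens the round trip by 2*d, changing the phase by
    4*pi*d / wavelength, so d = phase_change * wavelength / (4*pi).
    """
    unwrapped = unwrap(phases)
    return [(p - unwrapped[0]) * WAVELENGTH_M / (4 * math.pi) * 1e6 for p in unwrapped]


# A fingertip moving one micron at a time...
tiny = [phase_for(i * 1e-6) for i in range(4)]
print([round(d, 3) for d in displacement_um(tiny)])

# ...and a 10 mm movement in 1 mm steps, which wraps the phase several times.
large = [phase_for(i * 1e-3) for i in range(11)]
print([round(d) for d in displacement_um(large)])
```

Unwrapping only works if the hand moves less than a quarter wavelength (about 1.25 mm here) between samples, so the sampling rate has to keep up with how fast the hand moves.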

This data is then handed over to software. As long as the data follows a consistent pattern, software can use it the same way it uses taps on a touch screen. That lets you control something like a music player, or kick off a more complex feature like Face Unlock.
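
On the software side, a gesture can be treated like any other input event. This hypothetical sketch (the Gesture names, threshold, and actions are our own inventions, not Google's Motion Sense API) classifies a short track of a hand's sideways position and hands the result to a music player the same way a button tap would:

```python
from enum import Enum
from typing import Optional


class Gesture(Enum):
    SWIPE_LEFT = "swipe_left"
    SWIPE_RIGHT = "swipe_right"
    NONE = "none"


# Hypothetical threshold: how far a hand must travel sideways, in centimeters,
# before the motion counts as a deliberate swipe rather than a twitch.
SWIPE_THRESHOLD_CM = 5.0

# Hypothetical music player bindings; gestures map to actions just like buttons would.
MUSIC_PLAYER_ACTIONS = {
    Gesture.SWIPE_RIGHT: "next track",
    Gesture.SWIPE_LEFT: "previous track",
}


def classify(lateral_positions_cm: list[float]) -> Gesture:
    """Turn a short track of sideways hand positions into a gesture."""
    if len(lateral_positions_cm) < 2:
        return Gesture.NONE
    travel = lateral_positions_cm[-1] - lateral_positions_cm[0]
    if travel >= SWIPE_THRESHOLD_CM:
        return Gesture.SWIPE_RIGHT
    if travel <= -SWIPE_THRESHOLD_CM:
        return Gesture.SWIPE_LEFT
    return Gesture.NONE


def handle(lateral_positions_cm: list[float]) -> Optional[str]:
    """Dispatch a gesture to the player; returns None when nothing should happen."""
    return MUSIC_PLAYER_ACTIONS.get(classify(lateral_positions_cm))


print(handle([-6.0, -2.0, 3.0, 7.0]))  # hand sweeps left to right
print(handle([0.0, 0.4, 0.2]))         # small jitter is ignored
```

A real gesture recognizer would need to be far more robust than a single threshold. The point is that once the radar data is reduced to a gesture, the app doesn't care whether it came from a finger or a wave of the hand.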

Soli is capable of a lot more than we've seen so far. Using it to make Face Unlock quick and seamless is pretty cool, but more importantly, it means a Soli chip is already on hand for other things once the phone is unlocked and in use. If Google were to create a public API that lets app developers tap into its capabilities, Soli could do just about anything our fingers can.

Jerry is an amateur woodworker and struggling shade tree mechanic. There's nothing he can't take apart, but many things he can't reassemble. You'll find him writing and speaking his loud opinion on Android Central and occasionally on Threads.