Is Gemini's expanded context window as useful as we thought?

New studies call Gemini 1.5 Pro and 1.5 Flash's capabilities into question

What you need to know

- Artificial intelligence models have an accuracy problem, but models that can process documents and information are supposedly more trustworthy.

- Gemini 1.5 Pro and Gemini 1.5 Flash, two of Google's best AI models, have expanded context windows that allow them to process and analyze more data.

- However, two new studies found that Gemini isn't that great at analyzing data.

Google's latest artificial intelligence models can accept more context data than any other mainstream solution available, but new studies are calling their effectiveness into question. As reported by TechCrunch, while Gemini 1.5 Pro and Gemini 1.5 Flash can technically process data in large context windows, they may not be able to understand it.

One study found that a "diverse set of [vision language models] VLMs rapidly lose performance as the visual context length grows," including Gemini. Another study revealed that "no open-weight model performs above random chance."

“While models like Gemini 1.5 Pro can technically process long contexts, we have seen many cases indicating that the models don’t actually ‘understand’ the content,” explained Marzena Karpinska, a postdoc at UMass Amherst's natural language processing group and one of the study's co-authors, to TechCrunch.

Large language models are informed by training data that allow them to answer certain questions without any additional materials. However, a key function of AI models is the ability to take in new data in order to answer prompts. For example, Gemini could use a PDF, a video, or an Android phone screen to gain additional context. All this data, plus its inbuilt data set, could be used to answer prompts.

A context window is a metric that quantifies how much new data an LLM can process. Gemini 1.5 Pro and Gemini 1.5 Flash have some of the widest context windows of any AI models on the market. The standard version of Gemini 1.5 Pro started out with a 128,000 token context window, with some developers being able to access a 1 million token context window.

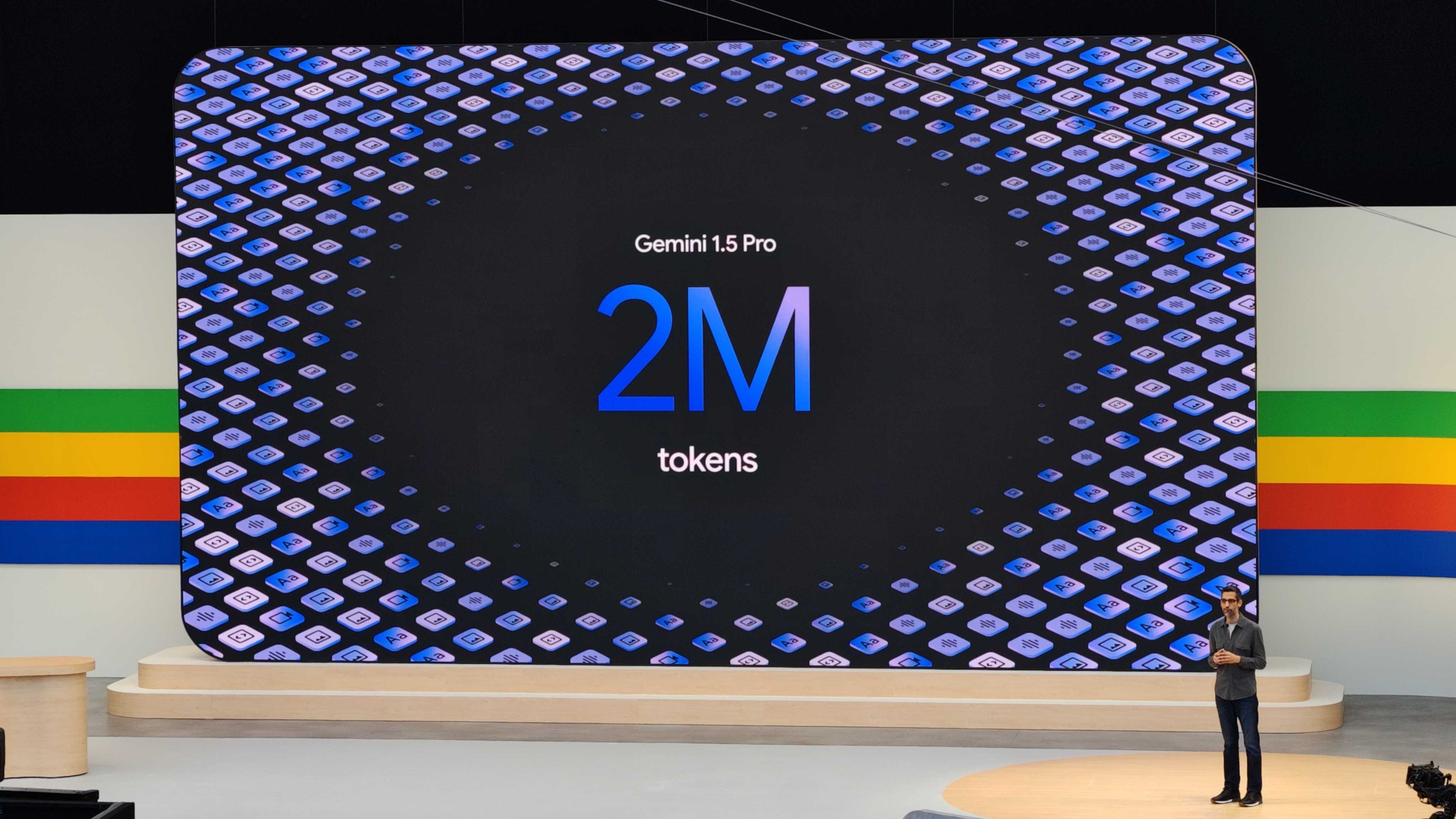

Then, at Google I/O 2024, the company revealed that Gemini 1.5 Pro and 1.5 Flash would be widely available with the larger 1 million token context window. For select Google AI Studio and Vertex AI developers, Gemini 1.5 Pro was available with a 2 million token context window. For reference, a "token" is a small chunk of data, such as a piece of a word. Google says that its 2 million token context window equates to roughly two hours of video, 22 hours of audio, or 1.4 million words.
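To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It takes Google's quoted numbers for the 2 million token window and scales them down to the smaller windows mentioned above. The assumption that the words-per-token ratio stays constant is ours, not Google's, and the function name `estimated_words` is purely illustrative.

```python
# Figures Google quotes for the 2 million token context window.
TOKENS_QUOTED = 2_000_000
WORDS_QUOTED = 1_400_000


def estimated_words(context_tokens: int) -> int:
    """Scale the quoted words-per-token ratio to another window size.

    Assumption: the ratio (0.7 words per token) holds at every size.
    Real text varies, so treat the result as a ballpark only.
    """
    return context_tokens * WORDS_QUOTED // TOKENS_QUOTED


if __name__ == "__main__":
    for window in (128_000, 1_000_000, 2_000_000):
        print(f"{window:>9,} tokens ~ {estimated_words(window):,} words")
```

By this estimate, the original 128,000 token window would hold somewhere around 90,000 words, roughly the length of a novel, while the 1 million token window would hold about 700,000.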

Google showed off these expanded context windows in pre-recorded demos. However, now that the Gemini 1.5 Pro model is in the hands of researchers, we're starting to learn its limitations.


What the studies found

Karpinska and the study's other co-authors noticed that most LLMs perform fine in "needle-in-the-haystack" tests. These situations require the AI models to simply find and retrieve information that may be scattered throughout source material. However, the information is usually confined to a sentence or two. When an AI model is tasked with processing information as part of a large context window and understanding it wholly, there is much more room for error.
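For a sense of why these tests are a low bar, here is a minimal Python sketch of how a needle-in-the-haystack check might be put together. This is not the researchers' code. The filler sentences, the "needle," and the `keyword_retriever` stand-in are all hypothetical, and a real test would send the text to an AI model rather than run a keyword search.

```python
import random

# Hypothetical filler and needle, invented for this illustration.
FILLER = [
    "The weather was mild that afternoon.",
    "Traffic moved slowly through the old town.",
    "A dog barked somewhere down the street.",
]
NEEDLE = "The secret passcode is 7421."


def build_haystack(filler, needle, n_sentences, depth, seed=0):
    """Bury one needle sentence among filler at a relative depth (0.0 to 1.0)."""
    rng = random.Random(seed)
    sentences = [rng.choice(filler) for _ in range(n_sentences)]
    sentences.insert(int(depth * n_sentences), needle)
    return " ".join(sentences)


def keyword_retriever(haystack, keyword):
    """Stand-in for a model: return the first sentence containing the keyword."""
    for sentence in haystack.split(". "):
        if keyword in sentence:
            # Splitting on ". " drops the period from most sentences; restore it.
            return sentence.rstrip(".") + "."
    return None


if __name__ == "__main__":
    haystack = build_haystack(FILLER, NEEDLE, n_sentences=1000, depth=0.5)
    print(keyword_retriever(haystack, "passcode") == NEEDLE)
```

Even a plain keyword search finds the needle here, which is the point: passing this kind of test shows that a system can locate a sentence, not that it understood the thousand sentences around it.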

NoCha was created to test the performance of Gemini and other AI models in these situations. The researchers describe NoCha as "a dataset of 1,001 minimally different pairs of true and false claims about 67 recently-published English fictional books, written by human readers of those books." The recency of these books is important, as the point of the test is to evaluate the models' ability to process new information — not information learned through past training materials.

"In contrast to existing long-context benchmarks, our annotators confirm that the largest share of pairs in NoCha requires global reasoning over the entire book to verify," the researchers write. "Our experiments show that while human readers easily perform this task, it is enormously challenging for all ten long-context LLMs that we evaluate: no open-weight model performs above random chance (despite their strong performance on synthetic benchmarks), while GPT-4o achieves the highest accuracy at 55.8%."

🪄 None of the LLMs perform well on NoCha (but humans do! 🏆), with all open-weights models performing below random 😱 Though #Claude-3.5-Sonnet reportedly shines in other areas, it lags behind #GPT-4o, #Claude-3-Opus, and #Gemini Pro 1.5 on NoCha. pic.twitter.com/5LF03DsseX (June 25, 2024)

Specifically, Gemini 1.5 Pro scored 48.1% on the test. Gemini 1.5 Flash came in behind at 34.2%. Both of Google's AI models performed worse than the best OpenAI and Anthropic models despite Gemini's context window advantage. In other words, it would have been better to guess than to use Gemini 1.5 Pro or Gemini 1.5 Flash's answers to the NoCha test.

It's important to note that humans scored 97% on the NoCha test, which blows any of the LLMs tested out of the water.

What does this mean for Gemini?

Gemini seemed to perform below expectations, but it isn't completely useless. The models are still useful for scanning through large swaths of data and finding the answer to a specific question, especially when that answer is confined to a single sentence. However, they won't help you find themes, plots, or general conclusions that require them to consume and understand data on a large scale.

Given the lofty claims Google made for Gemini, this may be disappointing. Android Central reached out to Google about these studies outside of normal working hours, and the company did not get back to us in time for publication. We will update the article once we have more information.

For now, these studies just go to show that artificial intelligence — as impressive as it may seem — still has a long way to go before it can usurp human reasoning. AI models like Gemini might be quicker at processing data than humans, but they are rarely more accurate.

Brady is a tech journalist for Android Central, with a focus on news, phones, tablets, audio, wearables, and software. He has spent the last three years reporting and commenting on all things related to consumer technology for various publications. Brady graduated from St. John's University with a bachelor's degree in journalism. His work has been published in XDA, Android Police, Tech Advisor, iMore, Screen Rant, and Android Headlines. When he isn't experimenting with the latest tech, you can find Brady running or watching Big East basketball.
