Hand tracking on Oculus Quest is absolutely brilliant

Updated May 18, 2020: Hand tracking is now generally available. The Oculus Store will start accepting titles with hand tracking on May 28, 2020. You can read more about hand tracking here.

I'm standing in the middle of a magical laboratory, complete with glowing rocks and bubbling cauldrons, and all I have to do is stand still and not poke the eyeball. The disembodied voice was very clear about not touching the eyeball, but it's right there, you know? It's looking at me, sitting in the cavity of this chest like a lock. I give in to my curiosity, lean in, and extend my finger straight into the center of this giant eyeball. As it flinches, the top of the chest flings itself open and several other eyeballs come flying out to cause a mess in the lab.

I have some cleaning up to do, but I won't be doing it with a controller. Like poking that eyeball, I'll be using my actual hands in VR. And I'll be doing it with no extra hardware at all, just my fingers and the Oculus Quest.

It is crazy how well this works

Oculus announced hand tracking during its keynote as a feature that was coming to Quest soon, but anyone at Facebook's conference this week can check out Elixir, a demo made specifically to show off this tech. It's a simple 10-minute experience, but what you get from it is beyond cool.

As soon as the headset is on, you can see your hands. As you rotate your wrists and touch your fingers together, the virtual hands in front of you do the same. In Elixir, you're encouraged to put your hand on pads to unlock things, pinch eye droppers to play with chemicals, and even grab wires to fix a broken circuit, and you do all of this with your actual fingers to spectacular effect.

As you continue to play through the demo, different chemicals spill onto your hands and cause different effects. At one point, I could close my fists and see Wolverine-style claws come out of my knuckles, or see rings of electricity form and dissipate. There's even a bit where you grab a pen floating in the air and sign a scroll floating in front of you. And it worked: My actual signature appeared on the page in front of me as my hand gripped an imaginary pen in the real world.

One thing you don't get from this, and which daily Oculus users will immediately notice is missing, is any kind of feedback. Vibration is a huge part of the Oculus (or general video game) experience, and Oculus controllers are better than most at delivering that feedback. It's downright weird to not have feedback when there's green goo bubbling over your hands or when you reach out and grab something and see your hand catch fire in VR. It's not a bad thing, just a thing you're likely to immediately notice is different from other Oculus experiences.

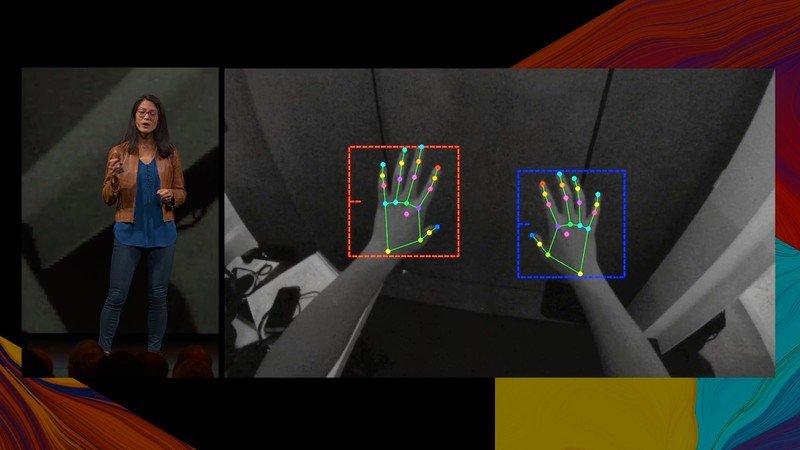

Getting better with time, and coming soon

As cool as this is, and I genuinely can't wait to try it again, there are some clear limitations based on how the tech works. Right now, hand tracking relies on a combination of model-based tracking and deep neural networks to figure out where your hands are and what they are doing. That means, on some level, the computer sitting next to your head is making some educated guesses about what your hands are doing based on lots of pictures and videos of other hands. In most situations I could throw at it in my limited time with the experience, it was great.

But occasionally I noticed that when I pressed my thumb and ring finger together in the real world, I wouldn't actually see my fingers touch in VR. It took me a second to figure out why, but it's because most of the time my middle finger was in the way and the headset couldn't actually see my whole finger. Something similar happened when I tried to put my hands together, to clap or something. Instead of risking getting it super wrong, the hands would just disappear for a moment and wait until two clear hands could be identified again.

The range on this feature right now is also fairly limited compared to the reach you get with a controller in your hand. The cameras on Quest can reliably track your hands in roughly a three-and-a-half-foot bubble in front of the headset. If I tried to fully extend one of my arms, or if I tried to reach too far above my head, the hand would disappear entirely and wouldn't return until my real hand came back into range.

This feature is obviously still in its very early days, and it's likely hand tracking will continue to improve before it ships, but for the moment it's clear you're not going to have the same kind of range you get with physical controllers. However, you're able to do some REALLY cool things when you can use your actual fingers, so I don't think it's going to be a big deal for most folks.

Oculus plans to ship hand tracking on Quest as a beta early next year, and to start setting the stage for developers to submit their own experiences with hand tracking. When that happens, the most popular standalone VR headset on the planet will have a feature that works natively in more things than just about any other consumer hand tracking system available today.